Can 16GB RAM Run an AI Agent? Running OpenClaw in a macOS Sandbox

Recently, local AI Agent projects have been heating up.

Especially after the release of the M4 Mac Mini, many developers realized for the first time that a machine the size of a palm can already run a complete AI workflow locally.

But once you start tinkering, you quickly hit a wall: 16GB of RAM is not as roomy as it sounds.

When the model, Docker, context cache, browser, IDE, LSP, file scanning, and shell toolchain all run together, the system quickly starts swapping. Inference then slows down, the Agent becomes sluggish, and the entire experience rapidly degrades from a “sense of the future” to a “fan stress test.”

So this project wasn’t initially about security.

Its starting point was actually quite realistic:

How to run OpenClaw stably on a 16GB Mac Mini?

But as I continued, I gradually discovered that performance issues and security issues in local Agents are often the same problem.

The real issue isn’t just “how much memory the model takes,” but whether the Agent Runtime can be bounded. Resource boundaries, file boundaries, execution boundaries, and network boundaries all answer the same question:

What exactly can this Agent use, and what should it not touch?

Thus, this project was born: OpenClaw Sandbox macOS

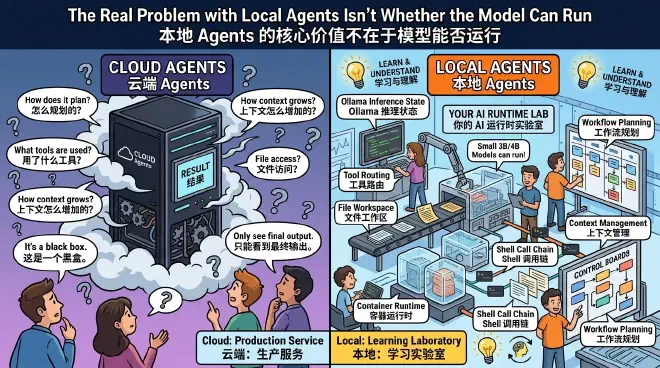

The Real Problem with Local Agents Isn’t Whether the Model Can Run

When many people first encounter AI Agents, all they see is a chat box.

But the truly complex parts are hidden behind it.

How does the Agent plan tasks?

How are Tool Calls executed?

Why does the Context keep expanding?

How does the model read files, call the shell, and modify code?

These things are hard to truly understand if you only use cloud-based Agents, because most cloud Agents are essentially black boxes: developers see only the final result and can rarely observe what happens inside the Runtime.

Local Agents are different.

They allow you to see the entire execution chain directly:

- Ollama’s inference state

- The growth process of the Context

- File changes in the Workspace

- Shell call chains

- Tool Routing

- Container Runtime behavior

- File system read/write processes

In a sense, a local Agent is more like an AI Runtime laboratory that you can take apart.

For developers in the learning stage, this is very important.

Even 3B or 4B models, including Q4-quantized ones, are enough to understand core issues like Agent Workflow, Context Management, Tool Calling, Runtime Scheduling, and Sandbox Isolation.

Cloud models are more like production services.

Local models are more like laboratories.

If the goal is to understand how an AI Agent actually operates, the first step might not be to call the strongest API, but to actually get a local Agent running.

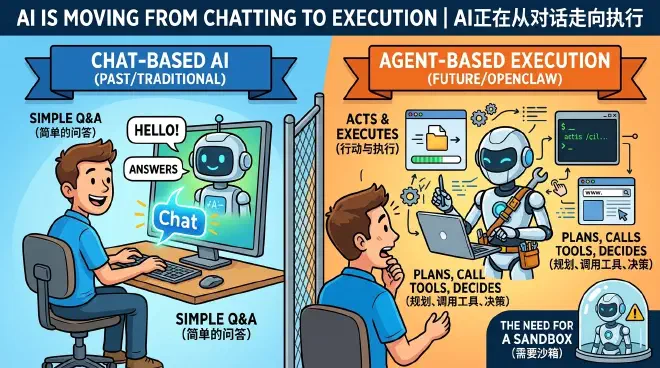

AI is Moving from Chatting to Execution

The most significant change in large models over the past few years isn’t just that they “talk better.”

More importantly, they have started to possess the ability to act.

Traditional ChatBots work in a very simple way: the user asks a question, the model generates text, and it ends.

But Agents are completely different.

An Agent is more like an automatic execution system. The user only provides the goal, and the model is responsible for planning, calling tools, executing tasks, reading results, and iterating continuously.

It’s no longer just about answering questions.

It has begun to truly participate in system execution:

- Reading files

- Executing Shell commands

- Modifying code

- Operating the browser

- Calling local toolchains

- Deciding the next step based on execution results
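The plan, call, observe, iterate cycle described above can be sketched in a few lines. This is a toy illustration, not OpenClaw's implementation: `AgentLoop`, `Step`, `scripted_planner`, and the `read_file` tool are hypothetical names, and the "model" is a scripted function standing in for a real LLM.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Step:
    tool: str       # name of the tool to call, or "done" to stop
    argument: str   # a single string argument, kept simple for the sketch


@dataclass
class AgentLoop:
    planner: Callable[[str, list[str]], Step]   # stands in for the model
    tools: dict[str, Callable[[str], str]]      # tool name -> implementation
    max_steps: int = 8                          # hard cap so the loop always ends
    history: list[str] = field(default_factory=list)

    def run(self, goal: str) -> list[str]:
        for _ in range(self.max_steps):
            step = self.planner(goal, self.history)
            if step.tool == "done":
                break
            tool = self.tools.get(step.tool)
            result = tool(step.argument) if tool else f"unknown tool: {step.tool}"
            # The observation is fed back so the next plan can react to it.
            self.history.append(f"{step.tool}({step.argument}) -> {result}")
        return self.history


def scripted_planner(goal: str, history: list[str]) -> Step:
    # Toy stand-in for a model: read one file, then stop.
    if not history:
        return Step("read_file", "notes.txt")
    return Step("done", "")


fake_files = {"notes.txt": "hello"}  # in-memory files, so nothing real is touched
loop = AgentLoop(
    planner=scripted_planner,
    tools={"read_file": lambda path: fake_files.get(path, "")},
)
trace = loop.run("summarize notes.txt")
```

Note that `history` grows on every step. In a real Agent that growing history is what gets sent back to the model, which is one reason the Context keeps expanding.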

This is where OpenClaw starts to get interesting.

It allows AI to truly enter the local operating system instead of just staying inside a chat window.

But the danger also starts here.

The real danger isn’t the model itself.

It is:

A large model with system permissions.

Once an Agent can execute commands, the risk level is completely different. Theoretically, it can read local files, access SSH keys, execute arbitrary Shell commands, operate the browser, and even modify system configurations.

So the question shifts: if OpenClaw has full system permissions by default, can we confine it to a runtime environment with clear boundaries?

That is the meaning of a Sandbox.
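To make "clear boundaries" concrete at the file level, here is a minimal sketch of a path check that a tool layer could run before touching the disk. It assumes a single workspace root, and `is_inside_workspace` is a hypothetical helper, not part of OpenClaw. A check like this complements container isolation rather than replacing it.

```python
from pathlib import Path


def is_inside_workspace(workspace: Path, candidate: str) -> bool:
    """Return True only if candidate resolves to a path inside workspace."""
    root = workspace.resolve()
    # Resolve relative to the workspace so "../" segments and symlinks are
    # collapsed before comparing. An absolute candidate replaces the root
    # in the join, so it is judged on its own location.
    target = (root / candidate).resolve()
    return target == root or root in target.parents
```

With a workspace at `/Users/me/agent-workspace` (a made-up path), `src/main.py` passes, while `../.ssh/id_ed25519` and `/etc/passwd` are rejected.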

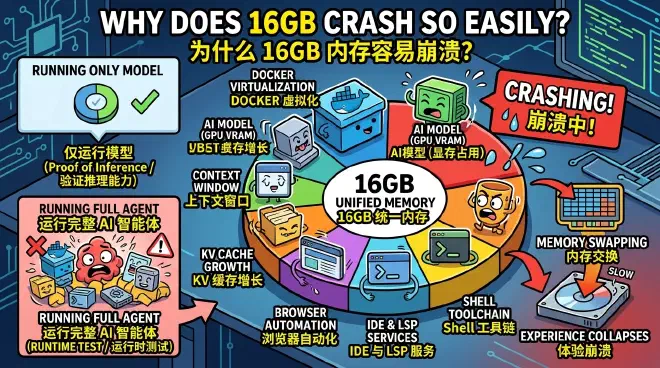

Why Does 16GB Crash So Easily?

Many people think the bottleneck for local AI is the GPU.

But after actually running an Agent, you’ll find the problem is far more than just inference.

A complete Agent Runtime consumes many resources simultaneously:

- Docker virtualization overhead

- Model weights held in memory

- Context Window expansion

- KV Cache growth

- Workspace file scanning

- Browser automation

- IDE and LSP services

- Shell toolchain and temporary files

On Apple Silicon’s UMA architecture, the CPU and GPU share the same unified memory.

This means the model, containers, system services, browser, and development tools are all competing for the same 16GB of RAM.

Once swapping starts, the experience collapses quickly.
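One way to see when this happens is to watch swap usage while the Agent runs. The sketch below parses the output of `sysctl vm.swapusage`, assuming the `total = … used = … free = …` layout that macOS prints. The sample string is made up for illustration, and `read_swapusage` only makes sense on a Mac.

```python
import re
import subprocess

# Conversion factors from the unit suffix to MiB.
_UNIT_TO_MIB = {"K": 1 / 1024, "M": 1.0, "G": 1024.0}


def parse_swapusage(text: str) -> dict[str, float]:
    """Parse `sysctl vm.swapusage` output into MiB values keyed by field."""
    fields = {}
    for name, value, unit in re.findall(r"(total|used|free) = ([\d.]+)([KMG])", text):
        fields[name] = float(value) * _UNIT_TO_MIB[unit]
    return fields


def read_swapusage() -> dict[str, float]:
    """Query the live value; requires macOS."""
    result = subprocess.run(
        ["sysctl", "-n", "vm.swapusage"],
        capture_output=True, text=True, check=True,
    )
    return parse_swapusage(result.stdout)


# Made-up sample line in the assumed format.
sample = "total = 2048.00M  used = 1536.50M  free = 511.50M  (encrypted)"
usage = parse_swapusage(sample)
```

Polling `read_swapusage()["used"]` every few seconds during an Agent session shows whether a slowdown lines up with swap growth.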

This is also why many people can run models but cannot run Agents.

Running a model only proves that the inference chain can work.

Running an Agent tests the entire Runtime.

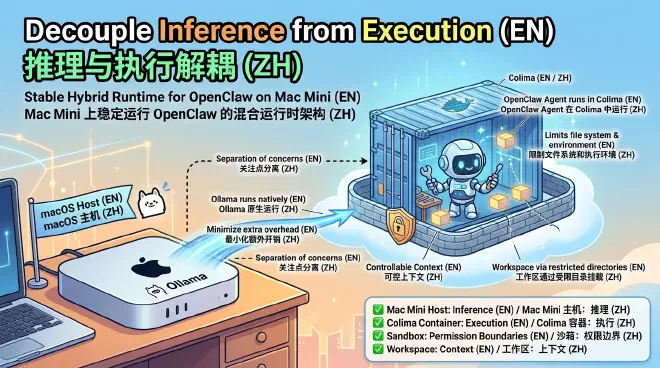

What This Sandbox Does

To make the 16GB Mac Mini run OpenClaw more stably, I finally adopted a hybrid Runtime architecture:

- Ollama runs natively on the macOS host

- OpenClaw Agent runs in a Colima container

- Colima uses `vz` as the virtualization backend

- File mounting uses `virtiofs`

- Workspace is mounted into the container via restricted directories

- The Agent can only access explicitly exposed work directories

- Inference and execution are separated into two different boundaries

The core idea is just one sentence:

Decouple inference from execution.

Keep Ollama on the host to minimize extra overhead for running the model.

Put OpenClaw into a container to limit the file system and execution environment it can see.

By doing this, the system is no longer a structure where “everything is squeezed into Docker Desktop,” but instead becomes:

- The host is responsible for model inference

- The container is responsible for Agent execution

- The Sandbox is responsible for permission boundaries

- The Workspace is responsible for controllable context

It’s not a perfect solution, but it’s much clearer than the default of running the Agent directly with full system permissions.
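As a rough sketch of how this layout can be expressed, the helper below only builds the command lines and does not run them. The workspace path, the CPU and memory values, and the image name `openclaw-sandbox:local` are placeholders, not the project's actual configuration. The Colima flags are the ones I understand current releases to accept, so check `colima start --help` before relying on them.

```python
import shlex


def colima_start_command(workspace: str, cpus: int = 2, memory_gib: int = 4) -> list[str]:
    """Build a `colima start` invocation using vz and virtiofs."""
    return [
        "colima", "start",
        "--vm-type", "vz",             # Apple Virtualization framework backend
        "--mount-type", "virtiofs",    # faster host-to-VM file sharing
        "--cpu", str(cpus),
        "--memory", str(memory_gib),   # GiB given to the VM (placeholder value)
        "--mount", f"{workspace}:w",   # expose the workspace as writable
    ]


def agent_run_command(workspace: str, image: str) -> list[str]:
    """Build a `docker run` invocation that sees only the workspace."""
    return [
        "docker", "run", "--rm",
        "--volume", f"{workspace}:/workspace",
        "--workdir", "/workspace",
        image,
    ]


workspace = "/Users/me/agent-workspace"  # hypothetical path
start_cmd = colima_start_command(workspace)
run_cmd = agent_run_command(workspace, "openclaw-sandbox:local")  # hypothetical image
print(shlex.join(start_cmd))
print(shlex.join(run_cmd))
```

Keeping the VM's memory allocation small is the point of the split: Ollama stays on the host and uses unified memory directly, so the VM only needs enough for the Agent and its tools.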

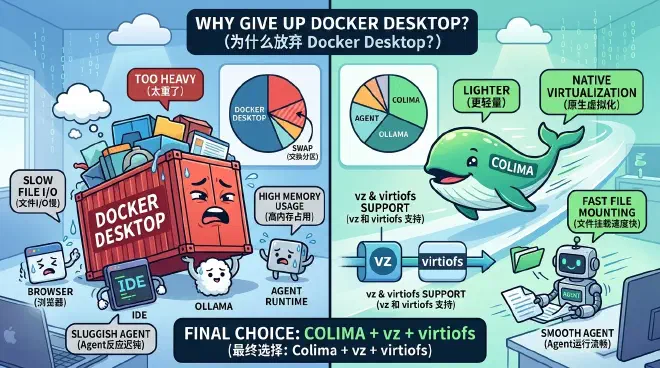

Why Give Up Docker Desktop?

Because it’s too heavy.

Especially on a 16GB UMA machine, Docker Desktop itself holds on to a significant amount of memory for as long as it runs.

Add the browser, IDE, Ollama, and Agent Runtime, and the system easily starts swapping.

Later, I gradually found that for Apple Silicon, Colima is more suitable for this local Agent scenario.

The reasons are not complicated:

- Lighter

- Closer to macOS native virtualization

- Can use `vz`

- Can cooperate with `virtiofs` to improve file mounting performance

This is crucial for an Agent.

Because the biggest bottleneck for a local Agent is often not the GPU, but the file system I/O.

The Agent frequently scans the Workspace, listens for file changes, reads and writes code, generates temporary files, executes Shell commands, and calls various toolchains.

If the file mounting speed is too slow, the entire Agent will appear very sluggish.

The benefits of virtiofs here are obvious: file operations on the mounted Workspace respond much closer to native speed, and there is far less waiting on tool calls.
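To compare mount options on your own machine instead of going by feel, a small timing probe is enough. The sketch below walks a directory tree and stats every file, which loosely mimics an Agent scanning the Workspace. `scan_workspace` is a hypothetical helper, and the numbers it reports depend heavily on caching and repository size.

```python
import os
import time


def scan_workspace(root: str) -> tuple[int, float]:
    """Walk root, stat every file, and return (file_count, elapsed_seconds)."""
    start = time.perf_counter()
    count = 0
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            try:
                os.stat(os.path.join(dirpath, name))
            except OSError:
                continue  # file vanished or is unreadable; skip it
            count += 1
    return count, time.perf_counter() - start


if __name__ == "__main__":
    files, seconds = scan_workspace(".")
    print(f"{files} files scanned in {seconds:.3f}s")
```

Running it once on the host and once inside the container against the same mounted directory gives a rough idea of the overhead the mount adds.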

So the final choice is:

Colima + vz + virtiofs

Instead of Docker Desktop.

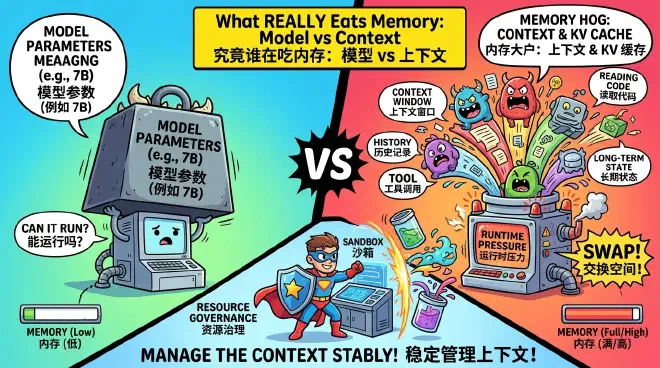

What Really Eats Memory is Often Not the Model

Another long-underestimated issue is the Context Window.

Many people focus on: “Can 7B run?”

But what truly kills the machine is sometimes not the model parameters, but the context.

Because the Agent constantly reads code, accumulates history, generates Tool Calls, writes to Memory, and maintains long-term state.

All of this adds pressure on the Context and the KV Cache.

Sometimes, a 4B model with a very long Context is even more likely to pull the machine into swap than a 7B model using a shorter Context.
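A back-of-the-envelope estimate shows why. The KV Cache stores one key and one value vector per layer, per KV head, per token, so it grows linearly with context length. The model shapes below are hypothetical round numbers chosen for illustration, not measurements of any specific model, and the weight estimate assumes a flat 4 bits per parameter.

```python
def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   context_tokens: int, bytes_per_value: int = 2) -> int:
    """Estimate KV cache size; the factor 2 covers keys and values."""
    return 2 * n_layers * n_kv_heads * head_dim * context_tokens * bytes_per_value


def weight_bytes(n_params: int, bits_per_weight: int = 4) -> int:
    """Rough size of quantized weights, ignoring per-block overhead."""
    return n_params * bits_per_weight // 8


# Hypothetical "4B-class" model with a 32k context and an fp16 cache.
small_total = (weight_bytes(4_000_000_000)
               + kv_cache_bytes(36, 8, 128, 32_768))

# Hypothetical "7B-class" model with a 4k context and an fp16 cache.
large_total = (weight_bytes(7_000_000_000)
               + kv_cache_bytes(32, 8, 128, 4_096))

GIB = 1024 ** 3
print(f"4B-class, 32k context: {small_total / GIB:.1f} GiB")
print(f"7B-class, 4k context:  {large_total / GIB:.1f} GiB")
```

Under these assumptions the smaller model with the long context needs more memory than the larger model with the short one, because its cache alone is several times the size of its weights.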

So the core question for a local Agent is no longer: “Can the model run?”

But: “Can the Runtime manage the context stably?”

This is why a Sandbox is not just a security facility.

It is also a resource governance facility.

When the Workspace, file system, execution environment, and context entry point are all bounded, the Agent’s behavior becomes more controllable, and resource consumption is easier to understand and adjust.
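One practical way to bound the context entry point is to cap both the context window and the history sent on each request. The sketch below builds a request body for Ollama's `/api/chat` endpoint with `options.num_ctx` set explicitly. The trimming policy, the default values, and the model name are assumptions for illustration, and the request is only built, not sent.

```python
import json


def build_chat_request(model: str, messages: list[dict],
                       num_ctx: int = 4096, max_messages: int = 12) -> dict:
    """Build an Ollama chat payload with a capped context and trimmed history."""
    # Keep the first system message (if any) so instructions survive trimming.
    system = [m for m in messages if m["role"] == "system"][:1]
    rest = [m for m in messages if m["role"] != "system"]
    recent = rest[-max_messages:] if max_messages > 0 else []
    return {
        "model": model,
        "messages": system + recent,
        "stream": False,
        "options": {"num_ctx": num_ctx},  # context window size in tokens
    }


history = [{"role": "system", "content": "You are a coding agent."}]
history += [{"role": "user", "content": f"step {i}"} for i in range(20)]

# "example-model:4b" is a placeholder, not a recommendation.
payload = build_chat_request("example-model:4b", history, max_messages=5)
print(json.dumps(payload, indent=2))
```

Dropping old turns is the crudest possible policy. Summarizing them instead keeps more information, at the cost of an extra model call.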

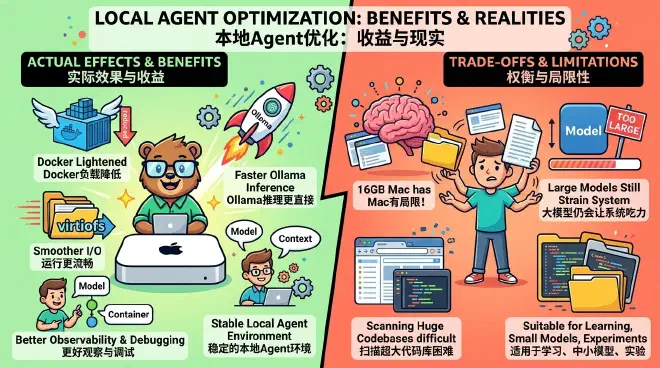

Actual Effects and Trade-offs

The goal of this scheme is not to turn a 16GB Mac Mini into a high-end workstation.

It solves another problem:

Under limited memory, make the local Agent as stable, observable, and debuggable as possible.

In actual use, several changes are quite noticeable:

- The constant background overhead of Docker Desktop is gone.

- After Ollama runs natively, the inference chain is more direct.

- Workspace I/O is smoother under Colima + `virtiofs`.

- The Agent’s file access boundary is clearer.

- When problems occur, it’s easier to judge whether it’s caused by the model, Context, container, or toolchain.

Of course, it has trade-offs.

16GB is still not enough to run large models, long contexts, multiple browsers, and complex IDE projects all at once without restraint.

If the model is too large, the context too long, or the Agent scans an excessively large code repository all at once, the system will still be strained.

So this scheme is more suitable for:

- Learning Agent Runtime

- Running small and medium model experiments

- Testing Tool Calling

- Observing local execution chains

- Building a controllable personal Agent environment

It’s not magic.

It just breaks the chaotic resource competition into several easier-to-understand boundaries.

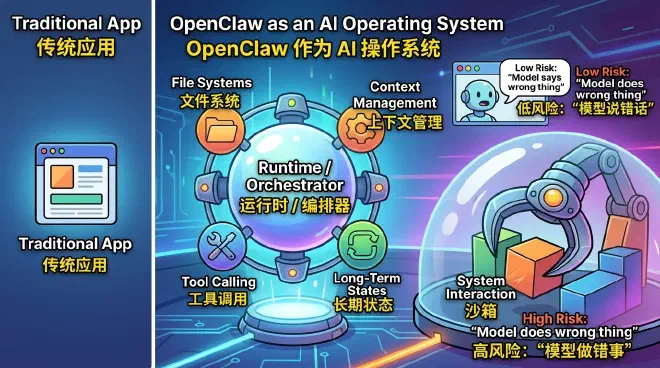

OpenClaw Doesn’t Look Much Like a Traditional App Anymore

This was the strongest feeling throughout the project.

OpenClaw is essentially no longer like a traditional application.

It is more like:

- A Runtime

- An Orchestrator

- An AI Shell

- A user-mode scheduling layer

It has begun to acquire file system access, long-term state, Tool Calling, Context management, and system interaction capabilities.

In a sense, AI Agents are gradually growing into a type of user-mode operating system.

This is why Sandboxes will become increasingly important.

In the future, what is truly dangerous may no longer be: “The model said the wrong thing.”

But:

The model did the wrong thing.

This is a completely different risk level.

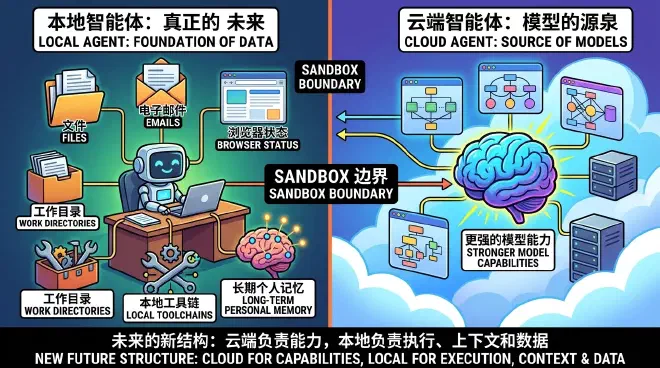

Local Agents Might Be the Real Future

Although many Agents currently run in the cloud, I increasingly feel that in the long run:

Truly powerful personal Agents will definitely return to the local machine.

Because the most valuable information already lives there:

- Files

- Emails

- Browser status

- Work directories

- Local toolchains

- Long-term personal memory

Cloud Agents have a hard time getting at this context.

Local Agents have it by default.

In the future, a new structure will likely form:

- The cloud is responsible for stronger model capabilities.

- The local machine is responsible for execution, context, and personal data.

And the Sandbox will become one of the most important boundaries between the two.

Sandbox Cannot Completely Solve the Problem

This project cannot completely solve the security issues of AI Agents.

But it at least provides an important direction:

Do not let AI have the entire system by default.

Future AI systems should be more like modern Apps:

- Least privilege

- Isolatable

- Auditable

- Revocable

Instead of an unrestricted super process.
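As a minimal sketch of what "least privilege" and "auditable" can look like at the tool layer, the snippet below checks each shell command against an allowlist and records every decision. The allowlist contents and the `authorize` helper are hypothetical. Checking only the program name is deliberately simplistic, so treat it as a complement to the container boundary, not a substitute for it.

```python
import shlex
from datetime import datetime, timezone

# Hypothetical allowlist; revoking a capability means removing an entry.
ALLOWED_COMMANDS = {"ls", "cat", "grep", "git"}

audit_log: list[dict] = []


def authorize(command_line: str) -> bool:
    """Allow a command only if its program name is allowlisted; log the decision."""
    try:
        argv = shlex.split(command_line)
    except ValueError:
        argv = []  # unbalanced quotes and similar input are rejected
    allowed = bool(argv) and argv[0] in ALLOWED_COMMANDS
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "command": command_line,
        "allowed": allowed,
    })
    return allowed
```

An authorized command should then be executed from its parsed argument list rather than through a shell, so that `;`, `&&`, and pipes in the input cannot smuggle in a second program.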

OpenClaw is very interesting because it allows many developers to truly see for the first time:

AI is starting to enter the operating system from the chat window.

And when AI truly starts to operate the system, Sandboxes will likely become one of the most important pieces of infrastructure in the next stage.

If you are also running local Agents on a 16GB Mac, you can use this Sandbox configuration as a starting point and adapt it from there.

Project: OpenClaw Sandbox macOS