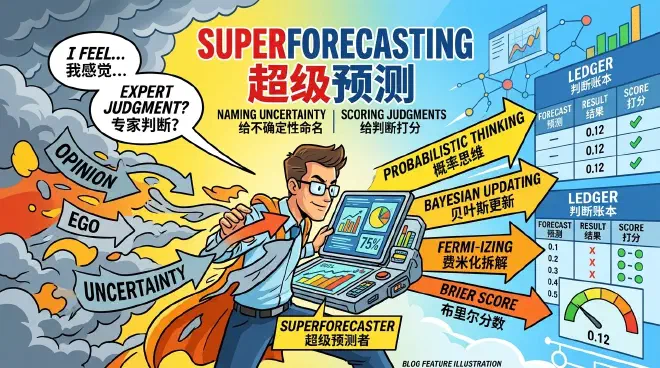

Superforecasting: Naming Uncertainty and Scoring Your Judgments

In the previous lecture, we discussed “Reference Class Forecasting.” The core principle is to switch your prediction from an “inside view” to an “outside view” — don’t always think your situation is unique; instead, look at how similar projects have fared. Reference class forecasting is a significant correction to wishful thinking, but it remains somewhat coarse.

If I asked you in January 2026 whether the US would attack Iran within three months, how would you make that prediction?

You could examine the reference class—how many similar crises in history actually ended in conflict. But what defines “similar”? Oil prices, election cycles, ally attitudes, regional proxy movements, and the president’s risk appetite are all crucial factors. Which category do you choose? You might find that the specific details of the event are vital, such as the rhythm of US military deployment in the Middle East or whether the negotiation window has truly closed. How do you integrate all these considerations into your forecast?

Surprisingly, until recently even experts didn’t know how to handle such predictions. Over the past decade, researchers have turned forecasting from a mystical craft into a rigorous engineering discipline.

The method is called “Superforecasting.” While it’s a technical skill, everyone can benefit from its underlying principles.

Foxes vs. Hedgehogs: Cognitive Styles in Prediction

Our protagonist is Philip E. Tetlock, a professor of psychology and political science at the University of Pennsylvania.

Tetlock achieved something remarkable early on. Over twenty years, he tracked 284 experts who commented on political and economic trends, collecting over 80,000 predictions. He found that they weren’t as good at predicting as one might imagine. Their accuracy was often worse than that of simple statistical algorithms, and sometimes no better than that of a chimpanzee throwing darts.

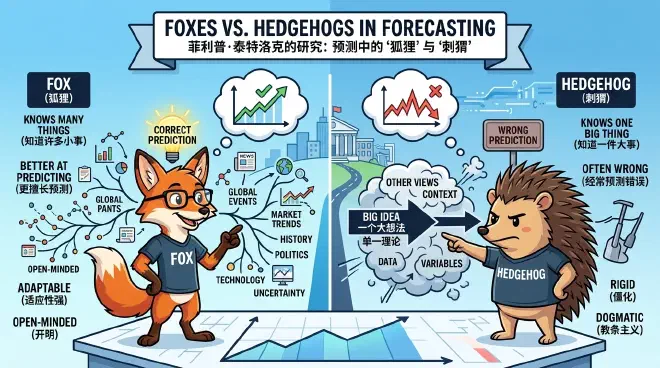

In his 2005 book Expert Political Judgment [1], Tetlock borrowed Isaiah Berlin’s metaphor of the “fox and the hedgehog” to classify experts. “Hedgehogs” know one big thing and use a single grand theory to explain everything, often falling into wishful thinking. “Foxes” know many small things, accept complexity, consider multiple clues, and adjust their views—leading to much better forecasts.

Overall, however, expert accuracy was low. Those who comment on national affairs in the media every day are often not very good at predicting.

This is easy to understand. Before the US captured Venezuelan President Maduro or killed Soleimani in Iran, how many Chinese experts swore that the US wouldn’t dare? Now, they won’t admit their failures.

Experts carry heavy burdens. People seek emotional value from predictions, viewing them as choosing sides. If you say Iran suffered heavy losses, some assume you want Iran to suffer. Experts also defend their theories. If you argue a certain diplomatic strategy must fail, you want it to fail in every instance. Prediction becomes an expression of opinion or a performance for a base.

People also bring internal vulnerabilities into forecasting. After a terrorist attack, both experts and the public drastically overestimate the probability of similar future attacks, falling into the availability heuristic.

Essentially, a prediction often reflects the forecaster more than the event itself.

The Birth of Superforecasting: From Craft to Engineering

But true forecasting is not about making a statement; it’s about “settling the accounts.”

To forecast, you must set aside position, ego, conformity, and the need for security.

Previously, methods like “Red Teaming” (where someone deliberately challenges your assumptions) or “Pre-mortems” (imagining a project has failed and working backward to find the cause) were used. Daniel Kahneman advocated for “Decision Hygiene”—standardizing meeting processes and ensuring independent judgments to reduce noise [2].

Yet, compared to Superforecasting, these methods are quite vague.

“Superforecasting” sounds flamboyant and is even a registered trademark, but it is a serious academic concept [3] representing a methodology.

It started in 2003 when US intelligence agencies claimed Iraq had weapons of mass destruction. Bush went to war, only to find nothing.

This was a profound humiliation for the intelligence community. To redeem themselves, IARPA launched a national forecasting tournament in 2011 [4]. It lasted four years, involving thousands of participants and over 500 real-world questions.

Tetlock entered with the “Good Judgment Project,” a team composed of amateurs: programmers, pharmacists, even retirees. With only minimal training, his team won by a huge margin. By the second year, they were so far ahead that most other teams were eliminated. Their predictions were even more accurate than those of intelligence analysts with access to classified data.

Tetlock detailed this approach in his book Superforecasting [5]. Let’s see how it’s done.

Fermi-izing: Decomposing Intuition into Operable Probabilities

The basic idea boils down to two things: Probabilization and Testability.

Probabilization means expressing every prediction as a percentage. Don’t say “The US will likely get tougher on Iran”; say “The probability of a US military strike on Iranian targets by [Date] is X%.” The former is a feeling; the latter is a forecast that can be settled.

Probabilization involves three steps:

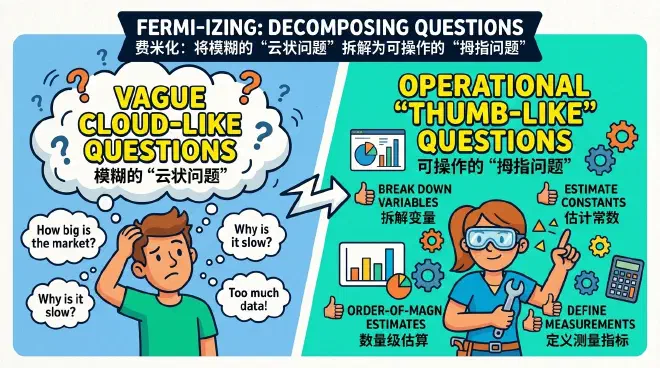

First, decompose vague “cloud-like” questions into operational “thumb-like” questions. This is called “Fermi-izing,” named after physicist Enrico Fermi.

If a leader asks, “Will the US and China decouple?”, that’s a cloud question. What does “decouple” even mean? Fermi-izing breaks it down: “What is the probability that a specific tariff bill passes in six months?” or “What is the probability that trade in a core technology drops by over 10%?” The big question’s probability is a synthesis of these small ones.

Second, start with an outside view and adjust with an inside view.

Look at the “reference class”—how similar situations in history ended. This gives you a base rate. Then, look at the specific details of the current event and adjust your probability based on its unique characteristics.

Bayesian Updating: Dynamically Refining Your Judgments

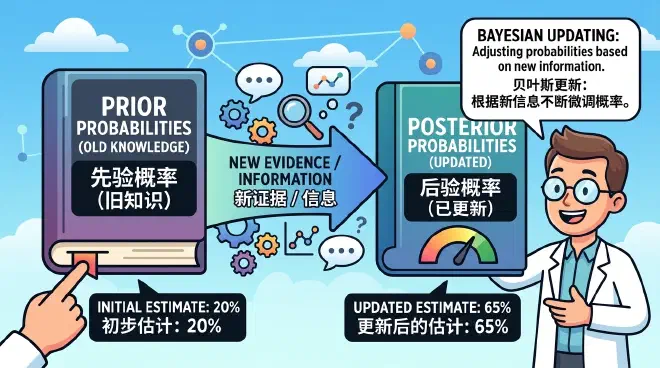

Third, use Bayesian updating.

We’ve discussed this before. Your current probability is your “prior.” As new information emerges, you adjust it in proportion to the strength of the evidence. A positive news report might move your probability from 43% to 46%; a negative one might then pull it down to 41%. You aren’t ignoring news, nor are you overreacting to it; you stay steady.
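To make those small steps concrete, here is a minimal Python sketch of the odds form of Bayes' rule. The function name `bayes_update` and the two likelihood ratios (1.13 for the mildly positive report, 0.80 for the negative one) are assumptions chosen for illustration so that the numbers land near the 43%, 46%, and 41% mentioned above; they are not values from Tetlock's work.

```python
def bayes_update(prior, likelihood_ratio):
    """Update a probability with the odds form of Bayes' rule.

    likelihood_ratio = P(evidence | event happens) / P(evidence | event does not happen)
    A ratio above 1 pushes the probability up; below 1 pushes it down.
    """
    if not 0.0 < prior < 1.0:
        raise ValueError("prior must be strictly between 0 and 1")
    prior_odds = prior / (1.0 - prior)
    posterior_odds = prior_odds * likelihood_ratio
    return posterior_odds / (1.0 + posterior_odds)


prior = 0.43
# Assumed ratio: the positive report is slightly more likely if the event is coming.
after_good = bayes_update(prior, 1.13)
# Assumed ratio: the negative report is somewhat less likely if the event is coming.
after_bad = bayes_update(after_good, 0.80)

print(f"{prior:.2f} -> {after_good:.2f} -> {after_bad:.2f}")
```

The point of the sketch is the size of the steps: weak evidence corresponds to a ratio close to 1, so the probability moves by a few points rather than swinging wildly.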

On the deadline, you submit your final answer. But you must then face the test.

Testing looks at your long-term performance across a portfolio of predictions. It measures “Calibration” (is your 70% probability right 70% of the time?) and “Resolution” (do you dare to give high or low probabilities rather than just sticking to 50%?).
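A calibration check can be sketched in a few lines of Python. The helper below, `calibration_table`, is a hypothetical name, and grouping forecasts by rounding to the nearest 10% is one simple choice among several. It only looks at calibration; it does not measure resolution.

```python
from collections import defaultdict


def calibration_table(forecasts, outcomes):
    """Group forecasts by stated probability (rounded to the nearest 10%)
    and report the observed hit rate and sample size for each group."""
    buckets = defaultdict(list)
    for probability, happened in zip(forecasts, outcomes):
        buckets[round(probability, 1)].append(happened)
    return {
        bucket: (sum(hits) / len(hits), len(hits))
        for bucket, hits in sorted(buckets.items())
    }


# Made-up track record: ten forecasts at 70% and five at 20%.
forecasts = [0.7] * 10 + [0.2] * 5
outcomes = [1] * 7 + [0] * 3 + [1, 0, 0, 0, 0]

for stated, (hit_rate, n) in calibration_table(forecasts, outcomes).items():
    print(f"stated {stated:.0%}: happened {hit_rate:.0%} of the time (n={n})")
```

In this invented record the forecaster is well calibrated: the events given 70% happened seven times out of ten. With a real ledger, a gap between the stated and observed columns shows where you are over- or under-confident.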

A key metric is the “Brier score” [6], which measures the squared error between your predicted probability and the actual outcome [7]. It forces you to be precise: if an event doesn’t happen, a 27% prediction is punished more than a 25% one and less than a 30% one.
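The arithmetic behind that comparison is easy to check. The sketch below uses the common binary form of the Brier score (the mean squared difference between forecast and outcome); the function name `brier_score` is just an illustrative choice.

```python
def brier_score(forecasts, outcomes):
    """Mean squared error between forecast probabilities and 0/1 outcomes.

    Lower is better; 0 is a perfect score in this binary form.
    """
    if not forecasts or len(forecasts) != len(outcomes):
        raise ValueError("need equally long, non-empty lists")
    total = sum((f - o) ** 2 for f, o in zip(forecasts, outcomes))
    return total / len(forecasts)


# The event did NOT happen (outcome 0), so a lower forecast earns a lower penalty.
for probability in (0.25, 0.27, 0.30):
    print(probability, round(brier_score([probability], [0]), 4))
```

For an event that does not occur, the penalty is simply the forecast squared, so each extra percentage point you assigned costs a little more.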

Probability and testing create a perfect feedback loop. Without probability, there is no calibration; without scoring, there is no learning. It forces you to shed ambiguity and ego.

Practical Application: Fermi-izing Your “Happiness”

Let’s try an example. Suppose you want to move from Beijing to Guangzhou and are anxious about your future happiness.

First, Fermi-ize “happiness” into four dimensions: work, housing, relationships, and lifestyle. For work, the question is: “What is the probability I find a job with at least 80% of my current salary within 3 months?”

Check the reference class: average job-seeking periods and salaries in Guangzhou. Suppose the base rate is 40%. Then apply the inside view: you have core patents and referrals. Adjust the probability to 65%.

You send out resumes. A week later, you have two interviews. Update the probability to 74%.
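One way to sanity-check adjustments like these is to ask how strong the evidence would have to be to justify them. The sketch below works backward from the probabilities in this example to the likelihood ratio each step implies. The helper names `odds` and `implied_likelihood_ratio` are hypothetical, and reading the jumps as Bayesian updates is an interpretation, not something the example itself specifies.

```python
def odds(probability):
    """Convert a probability into odds in favor."""
    return probability / (1.0 - probability)


def implied_likelihood_ratio(before, after):
    """How many times more likely the evidence must be if the event is true
    than if it is false, for a move from `before` to `after` to be justified."""
    return odds(after) / odds(before)


# Base rate 40% -> 65% after accounting for patents and referrals.
inside_view_step = implied_likelihood_ratio(0.40, 0.65)
# 65% -> 74% after landing two interviews in a week.
interview_step = implied_likelihood_ratio(0.65, 0.74)

print(f"patents and referrals: x{inside_view_step:.2f}")
print(f"two interviews:        x{interview_step:.2f}")
```

If a ratio looks too large for the evidence at hand, that is a hint the adjustment was driven by optimism rather than information.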

Repeat for other dimensions. If three out of four have over a 60% probability, you’re likely safe. Your decision is no longer a personality statement or a gamble; it’s an operational project.
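The three-out-of-four rule can be written down directly. In the sketch below, only the 74% for work comes from the example; the other three probabilities are invented placeholders. As an optional cross-check, `prob_at_least` estimates the chance that at least three dimensions actually turn out well, under the strong assumption that the dimensions are independent.

```python
from itertools import product


def count_above(probabilities, threshold=0.60):
    """Count how many dimensions have a probability above the threshold."""
    return sum(1 for p in probabilities.values() if p > threshold)


def prob_at_least(probabilities, k):
    """P(at least k dimensions go well), ASSUMING the dimensions are independent."""
    values = list(probabilities.values())
    total = 0.0
    # Enumerate every combination of success and failure across dimensions.
    for combo in product([True, False], repeat=len(values)):
        if sum(combo) >= k:
            weight = 1.0
            for went_well, p in zip(combo, values):
                weight *= p if went_well else (1.0 - p)
            total += weight
    return total


# Work is from the example above; the other three values are hypothetical.
dimensions = {"work": 0.74, "housing": 0.70, "relationships": 0.55, "lifestyle": 0.65}

print("dimensions above 60%:", count_above(dimensions))
print("P(at least 3 go well):", round(prob_at_least(dimensions, 3), 2))
```

The two numbers answer different questions: the first is the rule of thumb, the second is how often the plan as a whole would work out if the individual estimates are right.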

Then, score yourself. After moving, compare the results with your predictions. Were you too optimistic? Refinement will make you a better forecaster. Research shows that even short-term training with scoring significantly improves accuracy [8].

The Present of Superforecasting: AI Collaboration and Trends

Tetlock’s “Good Judgment Project” is now a service (goodjudgment.com).

In predicting Fed interest rate adjustments from 2023 to 2025, their superforecasters consistently beat market pricing, with a significantly better Brier score [9]. In 2025, GiveWell even hired them to forecast foreign aid and global health funding [10].

They’ve also had failures, such as the Russia-Ukraine war. The project admitted in a post-mortem [11] that between Jan 21 and Feb 10, 2022, they kept the probability of a full invasion below 50%—yet it happened. They over-relied on historical reference classes for a rare event and underestimated Putin’s risk tolerance and the accuracy of US intelligence.

By 2025, Tetlock and his colleagues had combined Superforecasting with AI. While standalone LLMs are currently less accurate than human superforecasters [12], one study found that forecasters working with LLM assistants were 24% to 28% more accurate than a control group [13].

For now, don’t leave forecasting entirely to AI. AI is great at searching, but humans are better at defining boundaries, choosing reference classes, and spotting outliers. However, AI can help strip ego from judgment.

Conclusion: The Engineering Revolution of Social Science

My greatest takeaway is the “engineering” of social science.

Early social science was like the humanities—focused on narratives and post-hoc explanations. Later, it became “scientific” with data and models—but often limited to single issues.

Reality is multi-factorial. One expert with one model isn’t enough. Superforecasting uses an engineering approach to integrate multiple factors into a trackable, scorable workflow.

Interestingly, it doesn’t use complex models! Sticking faithfully to “reference classes” and “Bayesian updates” already gets you far. Models are for explanation and intervention; prediction is simpler.

Forecasting is a major business. Perhaps “Forecasting Engineering” should be a university major. The world might not need many thinkers, but it needs many “parameter tuners.”

If you’ve been misled by expert predictions, ask yourself: do you want a statement of faith, or a forecast? Do you want emotional value, or decision value?

The most magical step is Fermi-izing. Psychiatrist Dan Siegel said, “Name it to tame it”—by naming an emotion, you can tame it. Similarly, once uncertainty is decomposed and named as a probability, it transforms from a terrifying emotion into a solvable engineering problem.

Don’t say “I’m mostly ready” or “Users should like it.” Say “The probability of scoring over 120 in math next time is 43%.”

Forecasting is not an extension of your opinion; it’s a craft of self-calibration. You aren’t seeking an oracle; you are training your judgment ledger.

Maybe start an Excel sheet today. Write your anxiety on the left (“Client will cancel tomorrow”) and the probability on the right (“35%”). Then, check back and settle the account.
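If you prefer code to a spreadsheet, the same ledger fits in a short Python sketch. Everything here is an illustrative assumption: the `Entry` fields, the helper names, and the CSV layout are one possible design, and the CSV export is included only so the ledger can still be opened in Excel.

```python
import csv
import io
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Entry:
    worry: str                       # the claim, phrased so it can be settled
    probability: float               # stated before the outcome is known
    happened: Optional[bool] = None  # None until the account is settled


def settle(ledger: List[Entry], worry: str, happened: bool) -> None:
    """Record the outcome for the first open entry matching the worry."""
    for entry in ledger:
        if entry.worry == worry and entry.happened is None:
            entry.happened = happened
            return
    raise KeyError(f"no open entry for: {worry}")


def mean_brier(ledger: List[Entry]) -> Optional[float]:
    """Average squared error over settled entries; None if nothing is settled."""
    settled = [e for e in ledger if e.happened is not None]
    if not settled:
        return None
    return sum((e.probability - float(e.happened)) ** 2 for e in settled) / len(settled)


def to_csv(ledger: List[Entry]) -> str:
    """Serialize the ledger so it can be opened in a spreadsheet."""
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    writer.writerow(["worry", "probability", "happened"])
    for e in ledger:
        writer.writerow([e.worry, e.probability, "" if e.happened is None else e.happened])
    return buffer.getvalue()


ledger = [Entry("Client will cancel tomorrow", 0.35)]
settle(ledger, "Client will cancel tomorrow", happened=False)
score = mean_brier(ledger)  # 0.35 squared, since the event did not happen
print(to_csv(ledger))
```

The key design choice is that the probability is written down before the outcome and never edited afterward; settling only fills in the `happened` column.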

Translate uncertainty into a scale,

Leave your ego at the door.

Don’t argue “I told you so,”

Ask “What did I report then?”

Those who can correct themselves time and again,

Are those who get closer to the truth time and again.

Notes

[1] Tetlock, Philip E. Expert Political Judgment: How Good Is It? How Can We Know? Princeton, NJ: Princeton University Press, 2005.

[2] Elite Daily Lessons Season 4, Noise 7: Collective Decision-Making Must Practice Hygiene.

[3] Mellers, Barbara A., et al. “Identifying and Cultivating Superforecasters as a Method of Improving Probabilistic Predictions.” Perspectives on Psychological Science 10, no. 3 (2015): 267–281.

[4] IARPA. “ACE: Aggregative Contingent Estimation.” Accessed March 21, 2026.

[5] Tetlock, Philip E., and Dan Gardner. Superforecasting: The Art and Science of Prediction. New York: Crown, 2015.

[6] Brier, Glenn W. “Verification of Forecasts Expressed in Terms of Probability.” Monthly Weather Review 78, no. 1 (1950): 1–3.

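[7] For those interested: the Brier score is $BS = \frac{1}{N}\sum_{i=1}^{N}(f_i - o_i)^2$, where $f_i$ is the forecast probability (between 0 and 1), $o_i$ is the actual outcome (1 or 0), and $N$ is the number of forecasts. In this form the score ranges from 0 to 1 for binary events; Brier’s original formulation sums over every outcome category and ranges from 0 to 2. Lower is more accurate, with 0 being a perfect forecast.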

[8] Chang, Welton, et al. “Developing Expert Political Judgment: The Impact of Training and Practice on Judgmental Accuracy in Geopolitical Forecasting Tournaments.” Judgment and Decision Making 11, no. 5 (2016): 509–526.

[9] Good Judgment Inc. “Superforecasters Continue to Beat the Market.” 2025.

[10] GiveWell. “Good Judgment Inc. — Forecasts on U.S. Foreign Aid Funding.” 2025.

[11] Good Judgment Inc. Post-Mortem: Lessons Learned in Superforecasting the Russian Invasion of Ukraine. New York: Good Judgment Inc., 2022.

[12] Karger, Ezra, et al. “ForecastBench: A Dynamic Benchmark of AI Forecasting Capabilities.” ICLR 2025.

[13] Schoenegger, Philipp, et al. “AI-Augmented Predictions: LLM Assistants Improve Human Forecasting Accuracy.” ACM Transactions on Interactive Intelligent Systems 15, no. 1 (2025).