Deep Decoding: How Large Language Models 'See' Structure?

From Native Multimodality to MoE: A Leap in Efficiency #

Preface: In corporate practice, we often view Large Language Models (LLMs) as “super brains” for processing information. However, when faced with misaligned Excel spreadsheets, deeply nested code, or bilingual technical documents, models differ noticeably in stability, and much of that difference traces back to how each one represents “structure” at the architectural level.

For enterprise managers and engineers, understanding these architectural differences is key to determining the efficiency and precision of AI collaboration workflows.

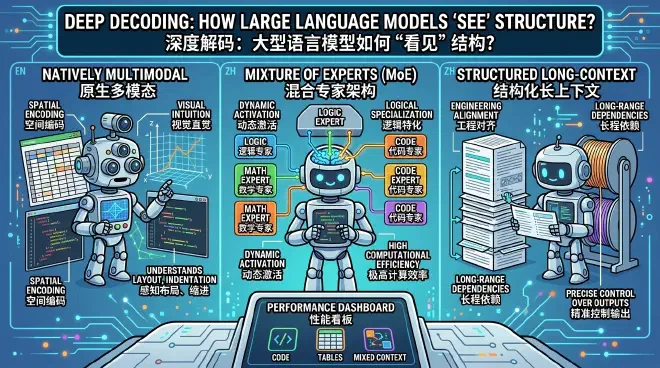

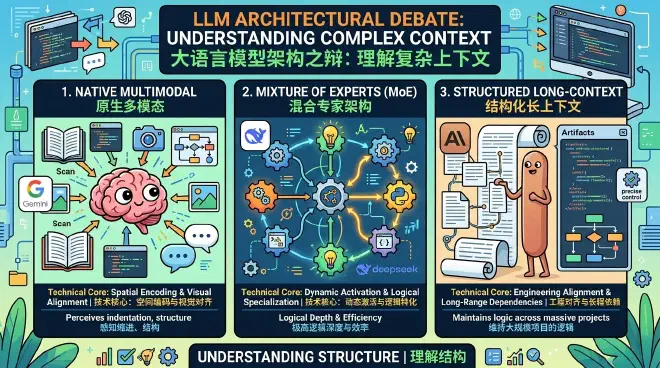

I. The Architectural Debate: How Do Models Understand Complex Context? #

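Current top-tier models can be grouped into three technical paths for processing multimodal and mixed-language inputs. The paths are not mutually exclusive (a single model can, for example, be both sparse and multimodal), so treat each label as the trait a model family is best known for: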

1. Natively Multimodal Architecture #

- Technical Core: Spatial encoding and visual alignment (e.g., Google Gemini, GPT-4o).

- Characteristics: Integration of vision and language modules within a single framework, sharing underlying attention weights.

- Advantages: Possesses “visual intuition.” When given a screenshot, scan, or PDF, it does not rely on extracted text alone; it also perceives indentation, line breaks, and physical spacing. Faced with chaotic formatting, it can recover the spatial layout by “scanning” the page, much like a human reader.

2. Mixture of Experts (MoE) Architecture #

- Technical Core: Dynamic activation and logical specialization (e.g., DeepSeek).

- Characteristics: Employs a sparse activation mechanism: a learned router sends each token to a small subset of expert sub-networks, so only a fraction of the model’s parameters is active at any step.

- Advantages: Large capacity at low inference cost. Because only the selected experts run, the model can carry many specialized parameters while keeping compute per token modest, which suits symbol-heavy work such as algorithmic refactoring.
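To make sparse activation concrete, here is a minimal sketch of top-k expert routing in plain Python. It is an illustration under stated assumptions, not the routing code of DeepSeek or any other model: the four toy experts, the router scores, and the function names `route_token` and `moe_layer` are all hypothetical.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_token(gate_scores, k=2):
    """Pick the k highest-scoring experts and renormalize their weights."""
    probs = softmax(gate_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return {i: probs[i] / norm for i in top}

def moe_layer(token, gate_scores, experts, k=2):
    """Run only the selected experts and mix their outputs (sparse activation)."""
    weights = route_token(gate_scores, k)
    return sum(w * experts[i](token) for i, w in weights.items())

# Hypothetical experts: trivial scalar functions standing in for feed-forward blocks.
experts = [lambda x: x + 1.0, lambda x: x * 2.0, lambda x: -x, lambda x: x ** 2]
gate_scores = [0.1, 2.0, -1.0, 2.0]  # made-up router scores for a single token

weights = route_token(gate_scores, k=2)
output = moe_layer(3.0, gate_scores, experts, k=2)
print(weights, output)
```

With these made-up scores, experts 1 and 3 tie for the top two slots, so only those two functions are evaluated; the other two are never called for this token. That skipped work is where the efficiency of the approach comes from.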

3. Structured Long-Context Architecture #

- Technical Core: Engineering alignment and long-range dependencies (e.g., Claude series).

- Characteristics: Focuses on utilizing ultra-long context windows to maintain logical alignment across large-scale projects.

- Advantages: Excels at “engineered alignment.” Product features such as Artifacts build on this, giving precise control over structured outputs like code and flowcharts.

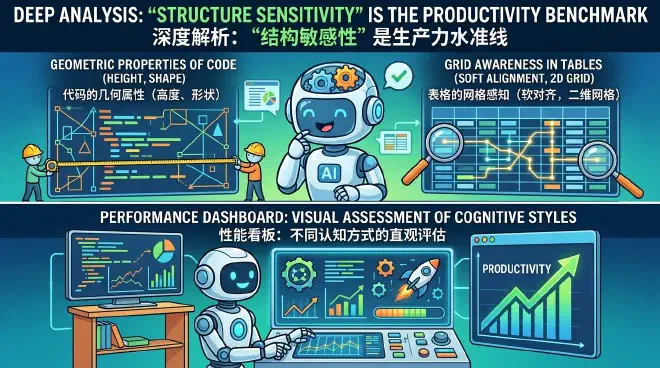

II. Deep Analysis: Why is “Structure Sensitivity” the Productivity Benchmark? #

Enterprise data often contains a vast amount of irregular information; the essence of information lies not just in “words,” but in “layout.”

- Geometric Properties of Code: Although code reaches the model as a token sequence, advanced models learn to read it as geometric symbols with “height” (indentation) and “shape” (brackets) rather than as a flat stream of characters. This lets the model capture which statements a block encloses.

- Grid Awareness in Tables: When a misaligned table is supplied as an image or PDF, multimodal models apply what this article calls “Soft Alignment”: they treat the table as a 2D grid and use the horizontal and vertical relationships between cells, which avoids the logical breaks that shifted characters cause in plain-text extraction.
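The “height” and “shape” of code can be made tangible with a small text-level analogy. The sketch below computes, for each non-blank line, its indentation level and the bracket nesting depth at the end of the line. It is only an illustration of the two signals, not a description of what any model computes internally; the function name `structure_profile` is hypothetical, and the bracket counter is deliberately naive (it does not skip brackets inside strings or comments).

```python
def structure_profile(source, indent_width=4):
    """Return (indent_level, bracket_depth_at_line_end) for each non-blank line."""
    openers, closers = "([{", ")]}"
    depth = 0
    profile = []
    for line in source.splitlines():
        if not line.strip():
            continue  # blank lines carry no structural signal here
        expanded = line.expandtabs(indent_width)
        leading = len(expanded) - len(expanded.lstrip(" "))
        indent = leading // indent_width          # the "height" of the line
        for ch in line:
            if ch in openers:
                depth += 1                        # the "shape": entering an enclosure
            elif ch in closers:
                depth -= 1                        # leaving an enclosure
        profile.append((indent, depth))
    return profile

sample = (
    "def f(x):\n"
    "    if x:\n"
    "        return [x,\n"
    "                x + 1]\n"
    "    return None\n"
)
profile = structure_profile(sample)
print(profile)
```

In the sample, the third line ends with an open bracket (depth 1) and the fourth line closes it, which is the kind of enclosure signal the bullet on code geometry refers to.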

III. Performance Dashboard: A Visual Assessment of Cognitive Styles #

| Evaluation Dimension | Mixture of Experts (MoE) | Structured Long-Context | Native Multimodal |
|---|---|---|---|
| Typical Representative | DeepSeek | Claude | Gemini / GPT-4o |
| Code Understanding | Strong symbolic logic reasoning | Clear logical boundaries, excellent at referencing | Spatial visual feature sensing |
| Tables/Documents | Textual logical pattern alignment | Excels at complex cross-document relations | 2D spatial feature encoding |
| Mixed Context | Optimized tokenizer, seamless transition | Extremely high instruction following | Captures physical proximity semantics |

IV. Decision Recommendations: How to Better Use LLMs to Assist Your Work? #

1. For Developers and Engineers: “Choose Tools” Based on Task Logic #

- Algorithm Refactoring and Logic-Intensive Tasks: Prioritize MoE architecture models, whose dynamic expert mechanism makes deep work on high-difficulty algorithms and pure logical reasoning efficient to run.

- Large-Scale Engineering Audits and System Analysis: Prioritize long-context architecture models, leveraging their mastery over long-range logic for full-repository analysis.

- Handling Corrupted Code or Document Formats: Prioritize natively multimodal models. Directly uploading code screenshots or original PDFs often yields better results than pure text input, as the model can use visual encoding to reconstruct damaged hierarchies.

2. Special Reminder: Security, Compliance, and Confidentiality (Must Read for Developers) #

While enjoying the efficiency dividends of AI, one must hold the line on corporate security:

- Data Masking: Never upload commercial secrets, customer private data, or undisclosed core source code to public AI platforms. Where a prompt must include real material, mask or redact the sensitive fields first.

- Prioritize Local Deployment: For highly sensitive code audits or patent technology analysis, prioritize using internally deployed model instances.

- Disable Training Feedback: When using commercial AI tools, ensure you have disabled the option to “allow input data to be used for model training improvement.”
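As one way to put data masking into practice, the sketch below redacts a few obvious patterns before text leaves the company. The three regular expressions and the `mask` function are illustrative assumptions; they will miss many kinds of sensitive data, so a real deployment would need a reviewed, organization-specific rule set and should not rely on this alone.

```python
import re

# Illustrative patterns only (assumed, not exhaustive).
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "IPV4": re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b"),
    "SECRET": re.compile(r"(?i)\b(api[_-]?key|token|password)\s*[:=]\s*\S+"),
}

def mask(text):
    """Replace every match of each pattern with a bracketed label."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text

masked = mask("contact alice@example.com, host 10.0.0.12, api_key = abc123")
print(masked)
```

Running the masking step locally, before any request is built, keeps the original values out of logs and network traffic as well as out of the prompt.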

3. For Enterprise Managers: Building a “Heterogeneous Agent” Collaboration System #

Establish Heterogeneous Clusters: Avoid using a single model for all tasks. Distribute tasks based on their modality—for example, assign PDF parsing to native multimodal models and business reviews to long-context models.
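The task distribution described above can be sketched as a simple lookup table. The task type names, the `ModelFamily` enum, and the `pick_family` function are hypothetical; the mapping itself just restates this article’s recommendations, and the `sensitive` flag reflects the advice to keep confidential work on internally deployed instances.

```python
from enum import Enum

class ModelFamily(Enum):
    MOE = "mixture-of-experts"
    LONG_CONTEXT = "structured long-context"
    MULTIMODAL = "native multimodal"

# Hypothetical task labels mapped to the family this article recommends.
ROUTING_TABLE = {
    "algorithm_refactoring": ModelFamily.MOE,
    "repository_audit": ModelFamily.LONG_CONTEXT,
    "business_review": ModelFamily.LONG_CONTEXT,
    "pdf_parsing": ModelFamily.MULTIMODAL,
    "corrupted_format": ModelFamily.MULTIMODAL,
}

def pick_family(task_type, sensitive=False):
    """Return (model family, deployment target) for a task type."""
    if task_type not in ROUTING_TABLE:
        raise ValueError(f"no routing rule for task type: {task_type!r}")
    deployment = "internal" if sensitive else "public"
    return ROUTING_TABLE[task_type], deployment

choice = pick_family("pdf_parsing")
audit = pick_family("repository_audit", sensitive=True)
print(choice, audit)
```

Failing loudly on an unknown task type is a deliberate choice in this sketch: it forces the team to decide where a new kind of work belongs instead of silently sending it to a default model.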

Promote “Structure-First” Prompt Engineering:

- Explicit Structuring: Clearly specify the meaning, boundaries, and expected types of data columns in your instructions.

- Two-Step Strategy: Guide the AI to “reconstruct the structure first, then perform subsequent analysis.”

- Utilize Multimodal Input: Encourage teams to leverage the multimodal capabilities of models by inputting original files to reduce information loss during text conversion.
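The first two points, explicit structuring and the two-step strategy, can be combined in a small prompt builder. This is a sketch under stated assumptions: the function name `build_structure_first_prompt`, the prompt wording, and the sample columns and data are all made up for illustration, and the resulting string would still need to be sent through whatever model client your team uses.

```python
def build_structure_first_prompt(columns, raw_text, question):
    """Build a two-step prompt: reconstruct the table first, then analyze it.

    columns: list of (name, expected_type, meaning) tuples.
    """
    schema = [
        f"- {name} ({expected_type}): {meaning}"
        for name, expected_type, meaning in columns
    ]
    parts = [
        "Step 1. Reconstruct the raw input as a table with exactly these columns:",
        *schema,
        "Mark any cell you cannot recover as UNKNOWN instead of guessing.",
        "Step 2. Using only the reconstructed table, answer:",
        question,
        "Raw input:",
        raw_text,
    ]
    return "\n".join(parts)

# Hypothetical column definitions and misaligned sample data.
columns = [
    ("order_id", "string", "unique order identifier"),
    ("amount", "decimal, USD", "invoice total before tax"),
]
prompt = build_structure_first_prompt(
    columns,
    "A-17   1200.50\nA-18 980",
    "Which order has the larger amount?",
)
print(prompt)
```

Stating the column meaning and expected type up front gives the model a target structure to rebuild, and separating the two steps makes it easier to inspect the reconstructed table before trusting the analysis.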

Summary: The evolution of large models is transitioning from “reading words” to “perceiving layout.” Teams that match model architecture to task structure, while strictly observing data security boundaries, can use AI as a digital architect that understands complex engineering environments, and gain a practical edge in their AI transformation.