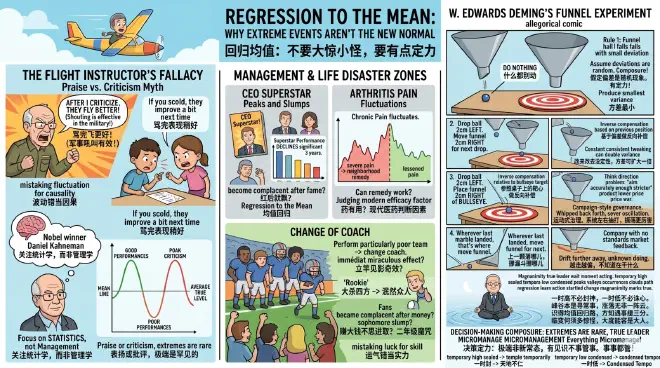

Regression to the Mean: Why Extreme Events Aren't the New Normal

Daniel Kahneman, the Nobel laureate in economics and one of the founding fathers of decision science, shared a compelling story in his book Thinking, Fast and Slow [1].

Kahneman was once conducting a training session for the Israeli Air Force. He presented a view well known in psychology: rewarding good performance is far more effective than punishing mistakes. A large body of research supports this [2]. Whether you are educating children, training athletes, or even teaching circus animals, positive reinforcement should be the primary approach.

Unexpectedly, an old flight instructor in the audience stood up to object. He said, “I’ve trained countless pilots. Every time a student performs an exceptionally beautiful flight maneuver and I praise him, his next performance is almost guaranteed to be worse. But every time someone flies like dog shit and I tear into him, he usually flies better the next time! Don’t talk to me about psychology; in the military, shouting is what works!”

Kahneman was momentarily speechless. Could so many studies be wrong?

If you are a parent, you might relate: when your child does something wrong and you scold them, they often improve a bit next time; but if they get an exceptionally high score on a test and you heap praise on them, their next result often isn’t as good. Could it be that “spare the rod, spoil the child” is actually the truth?

Kahneman later realized that while the old instructor’s observation was correct, his explanation was wrong.

It wasn’t management; it was statistics. It wasn’t that criticism was effective; it was “Regression to the Mean.” The logic is that exceptionally good or bad performances are extreme cases and are rare. Therefore, the next performance will naturally be less extreme. Even without criticism or praise, it will move closer to the average.

The “Selection Effect” we discussed in the previous session occurs when you draw the wrong causal conclusions because you haven’t seen all the data. “Regression to the Mean,” by contrast, is when you mistake normal fluctuations in data for causality. It causes you to overreact to extreme events.

✵

Regression to the mean was first described in 1886 by Francis Galton, a British scientist and half-cousin of Charles Darwin [3]. At the time, he was studying the inheritance of height and found that children of tall parents were, on average, not as tall as their parents, and children of short parents were, on average, not as short. Did God love fairness so much that he specifically pulled extremes toward the center?

Galton spent over a decade figuring it out, and the logic is actually quite simple. Most things involve a certain element of luck. It can be said that:

Your observed result = True level of ability + Random luck.

If someone performs “extremely well” in a single test, it usually means they not only have a certain level of skill but also happened to have “extremely good luck” that day. The probability of having such good luck twice in a row is small. So, in the next test, even if their ability hasn’t changed at all, that good luck is unlikely to reappear, and their next performance is very likely to be worse than the current one.

The reverse is also true. A botched performance isn’t just a lack of ability; it’s also extremely bad luck. The probability of constantly encountering bad luck is very low, so the next time luck won’t be as bad, and performance naturally improves.

It’s as if there is a force pulling their performance back to their “true level.” Of course, there is no such force. Even without the instructor’s praise or criticism, the good won’t stay good forever, and the bad won’t stay bad forever. It’s just normal random fluctuation!
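The “ability plus luck” model above can be checked with a small simulation. The following is a minimal sketch, not anything taken from Galton or Kahneman: the function names, the normal distributions, and the equal spread of skill and luck are all illustrative assumptions. Each simulated pilot has a fixed skill, luck is redrawn for every flight, and nobody is praised or criticized.

```python
import random
import statistics


def simulate_two_flights(n_pilots=10_000, skill_sd=1.0, luck_sd=1.0, seed=0):
    """Return (first, second) score lists: skill is fixed, luck is redrawn."""
    rng = random.Random(seed)
    skills = [rng.gauss(0.0, skill_sd) for _ in range(n_pilots)]
    first = [s + rng.gauss(0.0, luck_sd) for s in skills]
    second = [s + rng.gauss(0.0, luck_sd) for s in skills]
    return first, second


def group_means(first, second, fraction=0.1):
    """Average first and second scores of the best and worst first-flight groups."""
    order = sorted(range(len(first)), key=lambda i: first[i])
    k = max(1, int(len(first) * fraction))

    def summarize(indices):
        return (statistics.fmean(first[i] for i in indices),
                statistics.fmean(second[i] for i in indices))

    return {"top": summarize(order[-k:]), "bottom": summarize(order[:k])}


if __name__ == "__main__":
    first, second = simulate_two_flights()
    for group, (before, after) in group_means(first, second).items():
        print(f"{group:>6}: first flight {before:+.2f}, second flight {after:+.2f}")
```

Under these assumptions the best group flies worse the second time and the worst group flies better, yet both remain on their own side of the average. With equal variance for skill and luck, the expected second score is about half of the first score's distance from the mean, because only the skill half carries over.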

Yet the human brain loves to attribute causes. Kahneman and his collaborator Amos Tversky later observed [4] that people find regression to the mean hard to grasp and frequently commit two types of errors: mistaking fluctuation for causality and mistaking luck for skill. Together, these can be called the “Regression Fallacy.”

✵

The trouble is that extreme values are so tempting to interpret.

If someone performs so excellently, isn’t it because they are exceptionally powerful?

If someone performs so poorly, isn’t it because there’s something wrong with them?

If someone goes from excellent to mediocre, isn’t it because they became complacent?

If someone goes from terrible to not-so-bad, isn’t it because our corrective measures worked?

Little do we know that all of this is likely just random fluctuation.

But in reality, you often don’t get to watch long enough to see the fluctuation: people tend to draw conclusions and take action the moment they see an extreme value.

When a CEO achieves breakthrough results, the company gives him a massive bonus, and magazines put him on the cover. Yet, research shows that CEOs who reach such peaks often see a significant decline in performance over the following three years [5]. The board then laments, “Look, this person became arrogant after becoming famous.”

A rookie player dominates the professional league in his first year but becomes mediocre in his second. Experts say it’s the “rookie wall” and that he needs to reflect and change his playing style. Fans say he became complacent after making big money—the “sophomore slump.”

In reality, they are just regressing to the mean. Whether or not the board hands out a reward, and whether or not the rookie changes his style or gets rich, a superstar’s performance the following year is unlikely to be as good as in the breakout year.

Old Zhang has arthritis. His knees usually hurt, but one day the pain was particularly severe. A neighbor gave him an ancestral remedy. After drinking it, the pain indeed lessened significantly. Can you say the remedy worked? You must realize that chronic pain naturally fluctuates. If you take action when the pain is at its worst, any treatment will appear effective. In fact, regression to the mean is a serious confounding factor when judging efficacy in modern medicine [6].
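Old Zhang's situation can be sketched the same way. The simulation below is purely illustrative: the pain scale, the baseline of 5, the threshold of 8, and the function name `inert_remedy_trial` are assumptions, and daily pain is treated as independent noise around a baseline, which is simpler than real chronic pain. The “remedy” is taken only on very bad days and has no effect at all in the model.

```python
import random
import statistics


def inert_remedy_trial(days=100_000, baseline=5.0, sd=1.5, threshold=8.0, seed=1):
    """Take a do-nothing remedy on flare-up days and compare pain before and after."""
    rng = random.Random(seed)
    pain = [rng.gauss(baseline, sd) for _ in range(days)]
    before, after = [], []
    for today, tomorrow in zip(pain, pain[1:]):
        if today >= threshold:      # a flare-up prompts taking the remedy
            before.append(today)
            after.append(tomorrow)  # the remedy changes nothing in this model
    return statistics.fmean(before), statistics.fmean(after), len(before)


if __name__ == "__main__":
    before, after, n = inert_remedy_trial()
    print(f"{n} flare-ups: pain {before:.1f} on the day, {after:.1f} the day after")
```

The day after each “treatment,” average pain is back near the baseline, simply because treatment days were selected for being unusually bad. This is one reason clinical trials compare against a control group that starts from the same extreme point.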

There has always been a legend in the world of football: if a team is performing particularly poorly, just changing the coach will usually have an immediate miraculous effect… that sounds a lot like regression to the mean.

It’s just like an emperor hearing of a natural disaster and issuing a “Decree of Self-Reproach,” after which no further disasters occur. Can you say the decree was effective?

✵

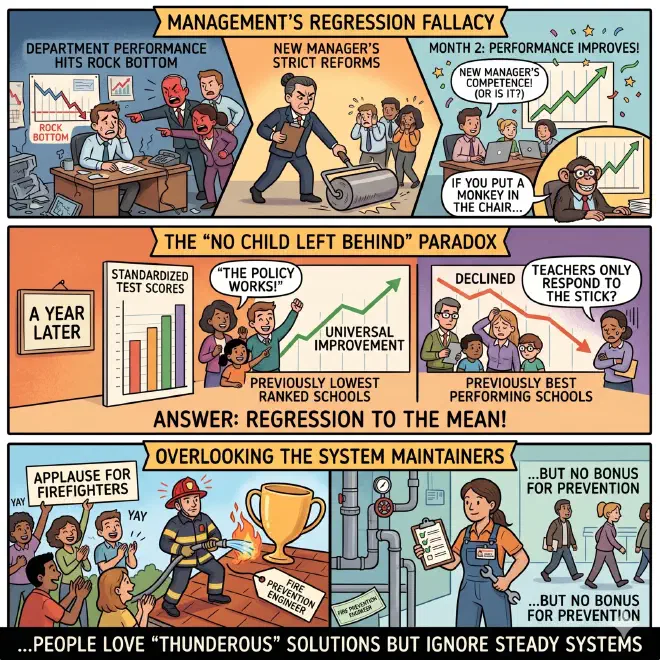

Management is a disaster zone for the regression fallacy.

A department’s performance hits rock bottom, the higher-ups are furious, so they fire the original manager and bring in a new one. The new manager implements a series of strict reforms. In the second month, performance improves! In such a case, who could say it wasn’t the new manager’s competence that saved the day?

But the truth might simply be that performance experienced a random fluctuation. If you had put a monkey in the manager’s chair, performance would also have bounced back the next month.

During the George W. Bush era, the US launched a major public education reform called “No Child Left Behind.” The policy’s logic was to reward or punish schools and teachers based on standardized test scores: if a school’s scores improved, they got bonuses; if they declined, funding was cut or the school might even be closed.

A year later, the schools that had ranked the lowest the previous year showed universal improvement. Some cheered, “Look, the policy works! You can’t do without performance metrics; even teachers can’t just rely on passion!” The problem was that if you looked at the schools that had performed best the previous year, their scores had actually declined. Was it that teachers only respond to the stick and not the carrot? The answer was regression to the mean [7].

But people love immediate, sweeping crackdowns—preferably “thunderous” campaign-style governance.

Meanwhile, those who quietly keep a system running at a high level are often overlooked. We applaud firefighters but don’t give bonuses to fire prevention engineers.

✵

Aggressive management based on the regression fallacy is not only unhelpful but often harmful.

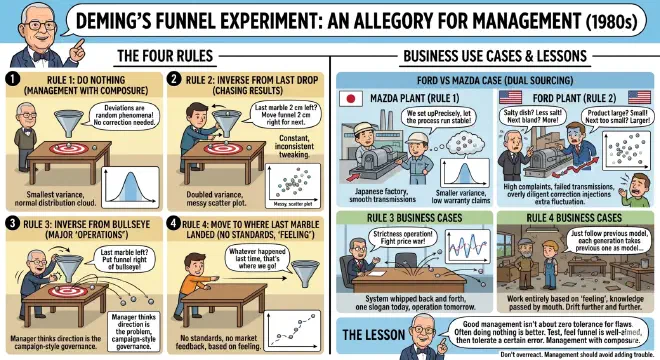

In the 1980s, W. Edwards Deming, the American statistician later known as the father of modern quality management, described the “Funnel Experiment” [8], which might be the most beautiful allegory in management.

Imagine you draw a bullseye on a table and place a funnel directly above it, aiming the funnel at the bullseye. You drop marbles one by one through the funnel, aiming to hit the bullseye.

No matter how level you hold the funnel or how accurately you aim, the marbles will inevitably hit the inner wall of the funnel as they fall, causing some deviation in their path. If you see a marble miss the bullseye, what do you do?

Deming envisioned four response rules representing four management methods.

Rule 1 is to do nothing. As long as I believe the funnel is aimed at the bullseye, I can fully assume that the deviations of the marbles are random phenomena and no correction is needed.

This is management with composure! The marble landings will scatter in a roughly normal distribution around the bullseye, and of the four rules, this one produces the smallest variance.

Rule 2 is to compensate for the last deviation, starting from the funnel’s last position. If a marble falls 2 cm to the left of the bullseye, I move the funnel 2 cm to the right of where it currently is for the next drop.

This is a management style that chases results. A customer says the dish was too salty this time, so I put less salt next time. Someone complains our product’s size is a bit too large, so we make it smaller; next time they say it’s too small, so we make it larger again. This constant, inconsistent tweaking, as computer simulations show, can double the variance.

Rule 3 is to make an inverse compensation relative to the bullseye on the table. If the marble falls 2 cm to the left, I place the funnel 2 cm to the right of the bullseye for the next drop.

If Rule 2 is a manager thinking their company has a problem, Rule 3 is a manager thinking their direction is the problem: “I’m not aiming accurately enough! Our product quality isn’t good; it must be because I’m not strict enough! The competitor lowered their price, so we must lower ours even more—we’ll fight a price war!” The result is campaign-style governance, with one slogan today and a major “operation” tomorrow… the system is whipped back and forth, and the oscillations become more and more severe; it’s even possible for the marbles to fly off the table.

Rule 4 is even more interesting: wherever the last marble landed, that’s where you move the funnel for the next drop.

You might ask, who manages like that? In fact, people do. This is a company with no standards that doesn’t look at market feedback; work is done entirely based on “feeling.” Masters pass knowledge to apprentices by word of mouth, and each generation automatically takes the previous one as its model… as a result, they drift further and further away until they don’t even know what they are doing anymore.

The lesson here is not to overreact to deviations. You can test several times first, and once you feel the funnel is reasonably well-aimed, that’s enough. A certain degree of error is tolerable—otherwise, your management is just adding trouble, and potentially a lot of it.
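The four rules are easy to try out on a computer. Below is a minimal one-dimensional sketch, assuming the bullseye sits at position 0 and each drop adds normally distributed noise to the funnel position. The function name `funnel`, the noise model, and the number of drops are illustrative choices, not Deming's own setup.

```python
import random
import statistics


def funnel(rule, drops=20_000, noise_sd=1.0, seed=2):
    """Simulate marble landings along one axis under one of the four rules."""
    rng = random.Random(seed)
    funnel_pos = 0.0  # the bullseye is at 0 and the funnel starts above it
    landings = []
    for _ in range(drops):
        landing = funnel_pos + rng.gauss(0.0, noise_sd)
        landings.append(landing)
        if rule == 1:
            pass                   # Rule 1: leave the funnel alone
        elif rule == 2:
            funnel_pos -= landing  # Rule 2: shift from current position by the opposite of the error
        elif rule == 3:
            funnel_pos = -landing  # Rule 3: place the funnel opposite the error, measured from the bullseye
        elif rule == 4:
            funnel_pos = landing   # Rule 4: place the funnel over the last landing spot
        else:
            raise ValueError("rule must be 1, 2, 3, or 4")
    return landings


if __name__ == "__main__":
    for rule in (1, 2, 3, 4):
        variance = statistics.pvariance(funnel(rule))
        print(f"Rule {rule}: variance of landings = {variance:.2f}")
```

In this model, Rule 2 turns each landing into the difference of two consecutive noise terms, which is why its variance comes out at about twice that of Rule 1. Rules 3 and 4 have no stable variance at all: the funnel position accumulates every past error, so the spread keeps growing the longer you run them.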

There was a real-life case at the time [9]. Ford was launching a new model and decided to use dual sourcing. They had their domestic Ford plant and a Mazda plant in Japan use the same set of blueprints to produce identical automatic transmissions. It turned out that cars with Ford-made transmissions had very high complaint and warranty claim rates, while the transmissions produced by the Mazda plant ran very smoothly. Why?

It turned out that Ford’s quality management approach was more like Rule 2 in the funnel experiment: as soon as a dimension deviated slightly from the target, even if it was still within the specified tolerance, they would immediately adjust the machinery. Mazda, on the other hand, was more like Rule 1: they tried to adjust the machinery as well as possible first, and as long as the process remained stable thereafter, they didn’t chase every single data point with random adjustments.

As Deming expected, the variation in Ford’s parts was much larger than in Mazda’s, even though both plants worked from the same blueprints. Overly diligent correction is equivalent to injecting extra fluctuation into the system.

Good management isn’t about having zero tolerance for flaws and fixing every problem the moment you see it. Often, doing nothing is far better than making a fuss.

✵

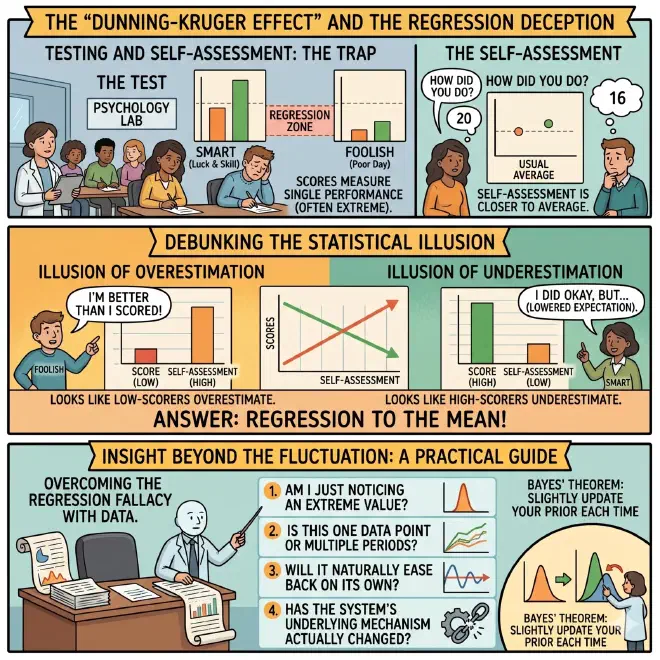

How easily are people fooled by regression to the mean? I made a shocking discovery during my research. The famous “Dunning-Kruger Effect,” to a significant extent, is actually caused by regression to the mean.

We have previously discussed the Dunning-Kruger Effect in our Elite Daily Lesson column [10]. It means that the more foolish a person is, the more likely they are to overestimate themselves, while intelligent people are more humble and tend to underestimate themselves. This pattern sounds intuitive, but since 2020, many in academia have raised doubts [11].

Setting aside technical details, let’s put it simply. Researchers judge whether a subject is “smart” or “foolish” by having them take a set of test questions in a lab. Since it’s a test, some will perform well and others poorly—both exceptionally high and exceptionally low scores involve an element of luck.

But when you ask people to evaluate themselves, their self-assessment will tend to be closer to their usual average. For those who performed poorly on the day, this average will typically be higher than their actual performance; for those who performed well, it will typically be lower than their actual score. Right?

Thus, from your perspective, it looks like low-scorers overestimate themselves and high-scorers underestimate themselves! Little did you know this is just a statistical effect.
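This argument can be reproduced with simulated people who have no bias whatsoever. The sketch below is only an illustration of the statistical point, not the method of any of the cited papers: the function names, the noise levels, and the use of percentile ranks are assumptions. Both the test score and the self-assessment are noisy but unbiased readings of the same underlying ability.

```python
import random
import statistics


def percentile_ranks(values):
    """Map each value to its percentile rank, strictly between 0 and 100."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    for position, i in enumerate(order):
        ranks[i] = 100.0 * (position + 0.5) / len(values)
    return ranks


def quartile_table(n=20_000, test_noise_sd=1.0, self_noise_sd=1.0, seed=3):
    """Per test-score quartile: (mean test percentile, mean self-assessed percentile)."""
    rng = random.Random(seed)
    ability = [rng.gauss(0.0, 1.0) for _ in range(n)]
    test = [a + rng.gauss(0.0, test_noise_sd) for a in ability]       # luck on the day
    self_view = [a + rng.gauss(0.0, self_noise_sd) for a in ability]  # honest but noisy
    test_pct = percentile_ranks(test)
    self_pct = percentile_ranks(self_view)
    rows = []
    for q in range(4):
        members = [i for i in range(n) if 25 * q <= test_pct[i] < 25 * (q + 1)]
        rows.append((statistics.fmean(test_pct[i] for i in members),
                     statistics.fmean(self_pct[i] for i in members)))
    return rows


if __name__ == "__main__":
    for q, (actual, perceived) in enumerate(quartile_table(), start=1):
        print(f"Quartile {q}: test percentile {actual:.0f}, self-assessed {perceived:.0f}")
```

Grouping people by their test score selects the unlucky into the bottom quartile and the lucky into the top one, so the self-assessments look inflated at the bottom and modest at the top. The familiar Dunning-Kruger picture appears even though nobody in the model misjudges themselves systematically.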

Some also believe it’s not entirely a statistical effect and that the Dunning-Kruger Effect does exist, just not as severely as previously estimated [12]… But the point I want to make is that gaining true insight from observations is extremely difficult. Even academia, with all its rigor, doesn’t dare to claim a final conclusion.

So you might ask, if even academic researchers find it so hard to judge the truth, how can I know if what’s in front of me is a real change in trend or just a random fluctuation?

This is the most fundamental question in statistics. There is no shortcut other than looking at more data, and more comprehensive data. Regarding regression to the mean, you should at least ask yourself four questions:

- Am I only noticing this because of an extreme value?

- Am I seeing a single data point or multiple periods of data?

- If I do nothing, will it naturally ease back a bit on its own?

- Has the system’s underlying mechanism actually changed?

The safest approach is to use Bayes’ theorem and slightly update your prior each time.
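One simple way to make “slightly update your prior” concrete is the textbook normal-normal update, sketched below. Everything numerical here is a made-up example: a department whose true performance you believe is about 100, with some uncertainty, and monthly readings that carry a lot of noise. The function names are hypothetical.

```python
def update_normal(prior_mean, prior_var, observation, noise_var):
    """Combine a normal prior with one noisy observation (normal-normal update)."""
    weight = prior_var / (prior_var + noise_var)  # how much to trust the new reading
    posterior_mean = prior_mean + weight * (observation - prior_mean)
    posterior_var = (1.0 - weight) * prior_var
    return posterior_mean, posterior_var


def track(readings, prior_mean, prior_var, noise_var):
    """Apply the update once per reading and return the sequence of estimates."""
    estimates = []
    mean, var = prior_mean, prior_var
    for reading in readings:
        mean, var = update_normal(mean, var, reading, noise_var)
        estimates.append(mean)
    return estimates


if __name__ == "__main__":
    # Assumed numbers: belief of 100 (variance 25), monthly noise variance 100.
    print(track([70.0], prior_mean=100.0, prior_var=25.0, noise_var=100.0))
    print(track([70.0] * 12, prior_mean=100.0, prior_var=25.0, noise_var=100.0))
```

With these assumed numbers, a single shocking reading of 70 moves the estimate from 100 only to 94, because most of the surprise is attributed to noise. If the readings stay at 70 month after month, the estimate keeps moving toward 70, which is how a real change in the underlying mechanism eventually shows up.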

✵

The most important lesson of this session is decision-making composure.

If a child gets into trouble, there’s no need to get angry; they already feel bad enough. If an employee botches a task, you don’t need to have a “talk” with them or implement reforms; they are unlikely to repeat the mistake next time. If a student finishes last in an exam this time, don’t rush to lecture them about values… similarly, if a boss occasionally pulls off something big, there’s no need to rush to deify them.

A whirlwind does not last all morning, and a sudden downpour does not last all day. Extremes are not the norm, and a person of insight doesn’t micromanage everything.

Those who react to every rumor—thinking the end of the world is coming when the market drops 2%, or that a bull market has begun when it rises 1%—who can’t sit still when they see something unusual, and who rush to give out heavy rewards or punishments in a fit of excitement—such “dramatic” decision-makers can torment a system to death.

As the saying goes—

A temporary high needs no deification;
A temporary low needs no condemnation.
Peaks and valleys are but common occurrences,
Ebbs and flows are no more than passing clouds.
Recognizing the path of regression to the mean,
One learns to wait a moment before acting.
Why be startled by change?
Magnanimity is the mark of a true leader.

Notes

[1] Kahneman, Daniel. Thinking, Fast and Slow. New York: Farrar, Straus and Giroux, 2011.

[2] Zoder-Martell, Kimberly A., Margaret T. Floress, Ronan S. Bernas, Brad A. Dufrene, and Samantha L. Foulks. “Training Teachers to Increase Behavior-Specific Praise: A Meta-Analysis.” Journal of Applied School Psychology 35, no. 4 (2019): 309–338.

[3] Galton, Francis. “Regression towards Mediocrity in Hereditary Stature.” Journal of the Anthropological Institute of Great Britain and Ireland 15 (1886): 246–263. See also Elite Daily Lesson Season 2, “There is Always a Force that Makes Us Regress to the Average.”

[4] Kahneman, Daniel, and Amos Tversky. “On the Psychology of Prediction.” Psychological Review 80, no. 4 (1973): 237–251.

[5] Malmendier, Ulrike, and Geoffrey Tate. “Superstar CEOs.” The Quarterly Journal of Economics 124, no. 4 (2009): 1593–1638.

[6] Morton, Veronica, and David J. Torgerson. “Effect of Regression to the Mean on Decision Making in Health Care.” BMJ 326, no. 7398 (2003): 1083–1084.

[7] Smith, Gary, and Joanna Smith. “Regression to the Mean in Average Test Scores.” Educational Assessment 10, no. 4 (2005): 377–399.

[8] Deming, W. Edwards. Out of the Crisis. Cambridge, MA: MIT Press, 1986.

[9] Bellows, Bill. “Specification-based Management Is Not Sufficient.” The W. Edwards Deming Institute, 2016.

[10] Elite Daily Lesson Season 3, “Progress Makes People Humble, Falling Behind Makes People Proud.”

[11] Gignac, Gilles E., and Marcin Zajenkowski. “The Dunning-Kruger Effect Is (Mostly) a Statistical Artefact: Valid Approaches to Testing the Hypothesis with Individual Differences Data.” Intelligence 80 (2020): 101449; Magnus, Jan R., and Anatoly A. Peresetsky. “A Statistical Explanation of the Dunning-Kruger Effect.” Frontiers in Psychology 13 (2022): 840180.

[12] Jansen, Rachel A., Anna N. Rafferty, and Thomas L. Griffiths. “A Rational Model of the Dunning-Kruger Effect Supports Insensitivity to Evidence in Low Performers.” Nature Human Behaviour 5, no. 6 (2021): 756–763.