Breaking the Million-Token Barrier: 5 Impactful Takeaways from DeepSeek-V4

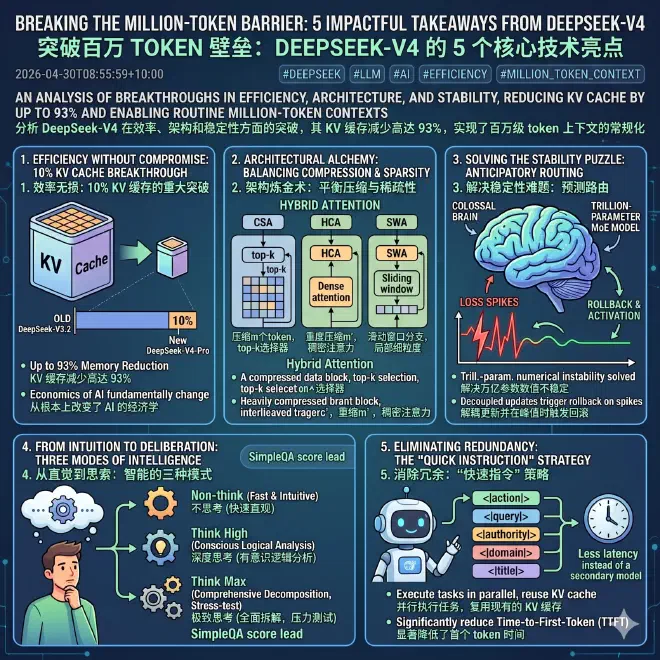

For years, Large Language Models (LLMs) have been haunted by a fundamental mathematical constraint: the quadratic computational complexity of attention. As a conversation grows longer or a document becomes larger, the compute required to process it does not grow in step with the length. It grows with the square of the length, so doubling the context roughly quadruples the attention work. This "quadratic wall" is a major reason context windows have stayed limited, and why models tend to become sluggish or lose track of earlier material when asked to analyze thousands of lines of code or sustain complex, multi-day workflows.
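To make the quadratic claim concrete, the short sketch below counts the query-key scores that standard causal self-attention computes. It is a generic illustration, not DeepSeek's code: with causal masking, token i attends to tokens 0 through i, so n tokens produce n(n + 1)/2 scores per head and per layer.

```python
def causal_attention_scores(n_tokens: int) -> int:
    """Query-key dot products in causal self-attention (per head, per layer).

    Token i attends to tokens 0..i, so the total is 1 + 2 + ... + n = n(n + 1) / 2.
    """
    return n_tokens * (n_tokens + 1) // 2


for n in (8_000, 128_000, 1_000_000):
    print(f"{n:>9,} tokens -> {causal_attention_scores(n):>19,} scores")

# Doubling the context roughly quadruples the attention work.
growth = causal_attention_scores(2_000_000) / causal_attention_scores(1_000_000)
print(f"2x context -> {growth:.2f}x scores")
```

The growth ratio lands just under 4, which is exactly the "double the context, quadruple the cost" behavior described above.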

DeepSeek-V4 arrives as a targeted strike against this efficiency barrier. The series comprises two models: DeepSeek-V4-Pro, a 1.6-trillion-parameter Mixture-of-Experts (MoE) model with 49 billion parameters activated per token, and DeepSeek-V4-Flash, a 284-billion-parameter model with 13 billion activated. Both are designed to make a one-million-token context window a routine standard rather than a computational luxury. Trained on 32 trillion tokens (Flash) and 33 trillion tokens (Pro), these models represent a foundational shift in how we scale intelligence.

1. Efficiency Without Compromise: The 10% KV Cache Breakthrough

In the world of AI scaling, larger context windows usually mean a steep drain on resources: attention compute grows quadratically with context length, and the Key-Value (KV) cache, the memory that stores the attention keys and values of every previously processed token, grows linearly with it. DeepSeek-V4 flips this narrative through a staggering reduction in both.

According to the reported figures, at a one-million-token context DeepSeek-V4-Pro requires only 27% of the single-token inference FLOPs (measured in equivalent FP8 FLOPs) and a mere 10% of the KV cache of its predecessor, DeepSeek-V3.2. The more compact Flash model goes even further, needing only 7% of the previous generation's KV cache.

This efficiency is counter-intuitive: maintaining a million tokens of "active memory" typically requires massive, expensive hardware clusters. By shrinking the KV cache by up to 93%, DeepSeek-V4 fundamentally changes the economics of long-context AI. This isn’t just about saving money; it is the difference between an AI that can "read" a codebase and one that can "live" in it as a persistent agent. As the researchers note:

"This enables us to routinely support one-million-token contexts, thereby making long-horizon tasks and further test-time scaling more feasible… ushering in a new era of million-length contexts."

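The KV cache percentages are easiest to appreciate in absolute terms. The sketch below uses the textbook KV cache formula for standard multi-head or grouped-query attention (2 for keys and values × layers × KV heads × head dimension × bytes per element × tokens) with a hypothetical baseline configuration. That configuration is not DeepSeek-V3.2's real architecture, whose attention layout differs; the sketch simply applies the reported 10% (Pro) and 7% (Flash) ratios to it.

```python
GIB = 1024 ** 3


def kv_cache_bytes(n_tokens: int, n_layers: int, n_kv_heads: int,
                   head_dim: int, bytes_per_elem: int) -> int:
    """Textbook KV cache size: one key and one value vector per token, KV head, and layer."""
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_elem * n_tokens


# Hypothetical baseline configuration (NOT DeepSeek-V3.2's real architecture).
baseline = kv_cache_bytes(n_tokens=1_000_000, n_layers=60, n_kv_heads=8,
                          head_dim=128, bytes_per_elem=1)  # 1 byte per element, e.g. FP8

# KV cache ratios relative to DeepSeek-V3.2, as reported for a 1M-token context.
REPORTED_KV_RATIOS = {"DeepSeek-V4-Pro": 0.10, "DeepSeek-V4-Flash": 0.07}

print(f"baseline: {baseline / GIB:.1f} GiB")
scaled = {}
for model, ratio in REPORTED_KV_RATIOS.items():
    scaled[model] = baseline * ratio
    print(f"{model}: {scaled[model] / GIB:.1f} GiB ({1 - ratio:.0%} smaller)")
```

Swapping in your own layer count, head layout, and precision gives a rough sense of the absolute memory at stake; the ratios themselves are the reported one-million-token figures.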
2. Architectural Alchemy: Balancing Compression and Sparsity

The secret to this efficiency lies in a "hybrid attention" architecture built on three distinct pillars. Rather than attending to every past token at full resolution, DeepSeek-V4 interleaves compressed and local attention so that causality and long-range context both remain intact:

- Compressed Sparse Attention (CSA): This mechanism compresses the KV cache of every m tokens into a single entry. It then applies a "top-k" selector, meaning the model only attends to the most relevant compressed entries rather than the entire history.

- Heavily Compressed Attention (HCA): This pillar uses a much larger compression rate m′, but attends densely to every compressed entry, ensuring the model doesn’t lose the broad, high-level context of the document.

- Sliding Window Attention (SWA): To prevent the model from breaking causality or losing local fine-grained dependencies, a supplementary SWA branch is used. This ensures the model always has a clear view of the most recent tokens.

By interleaving these approaches, the model balances fine-grained local dependencies with massive cross-document analysis. This design is particularly well suited to complex agentic workflows, where an AI must remember specific technical details from a massive file while following a broad, long-term plan.
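To make the three pillars concrete, here is a toy, pure-Python sketch of which cached entries a single query at position t can see under each branch. Several choices are assumptions for illustration rather than DeepSeek-V4's actual design: mean pooling stands in for the learned compression, plain dot-product scores stand in for the top-k selector, only fully completed blocks are compressed (the unfinished tail is left to the sliding window), and the values of m, m′ (`m_prime`), k, and the window size are hypothetical. How the branches are combined or interleaved across layers is not modeled.

```python
import random
from typing import Dict, List, Sequence

Vector = List[float]


def dot(a: Sequence[float], b: Sequence[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def mean_pool(vectors: Sequence[Vector]) -> Vector:
    # Assumption: mean pooling stands in for the learned compression step.
    return [sum(col) / len(vectors) for col in zip(*vectors)]


def compressed_blocks(keys: Sequence[Vector], t: int, block: int) -> List[Vector]:
    # Only blocks ending at or before position t are compressed, which preserves
    # causality; the unfinished block at the end is covered by the sliding window.
    n_complete = (t + 1) // block
    return [mean_pool(keys[j * block:(j + 1) * block]) for j in range(n_complete)]


def csa_selected(query: Vector, keys: Sequence[Vector], t: int, m: int, top_k: int) -> List[int]:
    # CSA sketch: compress every m tokens, then keep only the top-k blocks by score.
    blocks = compressed_blocks(keys, t, m)
    ranked = sorted(range(len(blocks)), key=lambda j: dot(query, blocks[j]), reverse=True)
    return sorted(ranked[:top_k])


def hca_selected(t: int, m_prime: int) -> List[int]:
    # HCA sketch: far coarser blocks, but every compressed block is attended (dense).
    return list(range((t + 1) // m_prime))


def swa_selected(t: int, window: int) -> List[int]:
    # SWA sketch: the most recent `window` raw tokens, up to and including t.
    return list(range(max(0, t - window + 1), t + 1))


def entries_per_query(n_tokens: int, m: int, m_prime: int, top_k: int, window: int) -> Dict[str, int]:
    # Entries the last query attends to in each branch (not the total cache stored).
    return {
        "csa": min(top_k, n_tokens // m),
        "hca": n_tokens // m_prime,
        "swa": min(window, n_tokens),
    }


rng = random.Random(0)
keys = [[rng.gauss(0.0, 1.0) for _ in range(4)] for _ in range(32)]
query = [rng.gauss(0.0, 1.0) for _ in range(4)]
t = 31
print("CSA blocks:", csa_selected(query, keys, t, m=4, top_k=3))
print("HCA blocks:", hca_selected(t, m_prime=16))
print("SWA tokens:", swa_selected(t, window=4))
print(entries_per_query(1_000_000, m=4, m_prime=128, top_k=512, window=128))
```

With these made-up settings, the last query of a million-token context touches 512 + 7,812 + 128 entries across the three branches instead of a million raw tokens. The CSA branch still has to store all n/m compressed entries so that the selector has something to choose from.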

3. Solving the Stability Puzzle: Anticipatory Routing

Training a model with 1.6 trillion parameters is a volatile process. Trillion-parameter Mixture-of-Experts (MoE) models are notorious for numerical instability: sudden, unpredictable "loss spikes" that can derail a training run and waste weeks of compute.

DeepSeek-V4 tackles this with Anticipatory Routing. In a standard MoE model, the backbone and the routing network (which decides which experts process each token) are updated simultaneously, and routing indices are always computed from the current parameters. DeepSeek-V4 decouples the two: at a given training step, the routing indices are calculated with "historical" parameters from an earlier step.
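A minimal sketch of the core idea: the router keeps snapshots of its own weights and computes top-k expert indices from a snapshot that is `delay` steps old, while the current weights keep updating. The class name, the `delay` parameter, and the linear scoring are hypothetical; how DeepSeek-V4 picks the historical step, and whether the gate values also use old parameters, is not covered here.

```python
from collections import deque
from typing import Deque, List, Sequence

Matrix = List[List[float]]


class HistoricalRouter:
    """Computes routing indices from router weights recorded `delay` steps earlier."""

    def __init__(self, delay: int) -> None:
        self.delay = delay
        # Keep the current snapshot plus `delay` older ones.
        self.snapshots: Deque[Matrix] = deque(maxlen=delay + 1)

    def record(self, router_weights: Matrix) -> None:
        # Call once per training step after the optimizer update; copy so that
        # later in-place updates do not alter the stored snapshot.
        self.snapshots.append([row[:] for row in router_weights])

    def route(self, token: Sequence[float], top_k: int) -> List[int]:
        stale = self.snapshots[0]  # oldest kept snapshot (up to `delay` steps old)
        scores = [sum(w * x for w, x in zip(row, token)) for row in stale]
        return sorted(range(len(scores)), key=lambda e: scores[e], reverse=True)[:top_k]


router = HistoricalRouter(delay=1)
router.record([[1.0, 0.0], [0.0, 1.0]])  # step 0: token [1, 0] scores highest for expert 0
router.record([[0.0, 1.0], [1.0, 0.0]])  # step 1: the weights now favor expert 1
print(router.route([1.0, 0.0], top_k=1))  # -> [0], routed with the step-0 weights
```

With `delay=0` the same calls would route to expert 1, which is the ordinary "route with current parameters" behavior.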

Crucially, Anticipatory Routing is not always on. An automatic detection mechanism triggers a short rollback and activates it only when a loss spike occurs; once the instability passes, the system reverts to standard training. This engineering trick allowed training to scale across 33 trillion tokens without the model "breaking," so that the vast parameter count translates into stable, reliable capability.
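The on-demand behavior can be sketched as a small controller that watches the training loss. The spike rule (loss above a multiple of a running mean), the rollback distance, and how long Anticipatory Routing stays on are all hypothetical parameters chosen for illustration; the source does not specify DeepSeek-V4's actual detection criterion.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class SpikeGuard:
    threshold: float = 2.0        # hypothetical: spike if loss > threshold * running mean
    window: int = 20              # hypothetical: number of recent losses in the running mean
    rollback_steps: int = 5       # hypothetical: how many steps to rewind on a spike
    anticipatory_steps: int = 50  # hypothetical: how long anticipatory routing stays on
    recent: List[float] = field(default_factory=list)
    anticipatory_left: int = 0

    def observe(self, step: int, loss: float) -> Tuple[int, bool]:
        """Return (next step to run, whether to use anticipatory routing for it)."""
        if self.anticipatory_left > 0:
            self.anticipatory_left -= 1
        full = len(self.recent) == self.window
        if full and loss > self.threshold * sum(self.recent) / self.window:
            # Spike: rewind a few steps and switch anticipatory routing on.
            self.anticipatory_left = self.anticipatory_steps
            return max(0, step - self.rollback_steps), True
        # Normal step: update the running window and move on.
        self.recent.append(loss)
        if len(self.recent) > self.window:
            self.recent.pop(0)
        return step + 1, self.anticipatory_left > 0


guard = SpikeGuard(threshold=2.0, window=3, rollback_steps=2, anticipatory_steps=2)
history = []
step = 0
for loss in [1.0, 1.0, 1.0, 5.0, 1.0, 1.0]:
    step, anticipatory = guard.observe(step, loss)
    history.append((step, anticipatory))
print(history)
```

In a real run, rolling back also means restoring model and optimizer state from a checkpoint; this sketch only tracks the step counter and the routing switch.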

4. From Intuition to Deliberation: The Three Modes of Intelligence

One of the most practical innovations in DeepSeek-V4 is the ability to scale "reasoning effort" on demand. Intelligence is not one-size-fits-all; DeepSeek-V4 formalizes this into three distinct modes:

- Non-think: Fast and intuitive, designed for routine tasks.

- Think High: Engages deliberate, step-by-step logical analysis for complex problem-solving.

- Think Max: The absolute maximum effort mode. It uses a specific system prompt that forces the model to comprehensively decompose problems, rigorously stress-test logic, and document every rejected hypothesis.
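On the client side, choosing a mode might look like the hypothetical sketch below. The enum, the `thinking` flag, the request shape, and the placeholder system prompt are all invented for illustration and are not DeepSeek's API; the only detail taken from the source is that Think Max relies on a dedicated system prompt.

```python
from enum import Enum
from typing import Dict, List


class ReasoningMode(Enum):
    NON_THINK = "non-think"
    THINK_HIGH = "think-high"
    THINK_MAX = "think-max"


# Placeholder only: the actual Think Max system prompt is not reproduced here.
THINK_MAX_SYSTEM_PROMPT = "<Think Max system prompt goes here>"


def build_request(mode: ReasoningMode, user_prompt: str) -> Dict[str, object]:
    """Assemble a hypothetical chat request for the chosen reasoning mode."""
    messages: List[Dict[str, str]] = []
    if mode is ReasoningMode.THINK_MAX:
        # Think Max is distinguished by its own system prompt.
        messages.append({"role": "system", "content": THINK_MAX_SYSTEM_PROMPT})
    messages.append({"role": "user", "content": user_prompt})
    return {
        "thinking": mode is not ReasoningMode.NON_THINK,  # hypothetical flag
        "messages": messages,
    }


print(build_request(ReasoningMode.THINK_MAX, "Prove the loop terminates."))
```

Keeping the mode as an explicit enum makes the cost/quality trade-off a per-request decision rather than a property of the deployment.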

The results are tangible. On the SimpleQA benchmark, which tests factual accuracy, DeepSeek-V4-Pro-Max scored 57.9%, outperforming existing open-source baselines by a staggering 20 absolute percentage points. While it still trails proprietary leaders such as Gemini-3.1-Pro in certain categories, it matches or outperforms frontier models on specific retrieval and academic long-context tasks.

5. Eliminating Redundancy: The "Quick Instruction" Strategy

Traditionally, when an AI chatbot performs auxiliary tasks, such as deciding whether to search the web or generating a title, it requires a separate prefill pass over the conversation or even a secondary small model. This adds latency and consumes redundant compute.

DeepSeek-V4 introduces Quick Instruction special tokens that are appended directly to the input sequence. These tokens allow the model to execute tasks in parallel by reusing the existing KV cache. The supported tokens include:

- <|action|>: Determining if a search is required.

- <|query|>: Generating the actual search terms.

- <|authority|>: Classifying the demand for source authoritativeness.

- <|domain|>: Identifying the domain of the prompt.

- <|title|>: Generating a concise conversation title.

By skipping this redundant prefill, DeepSeek-V4 significantly reduces time-to-first-token (TTFT). The user perceives a faster, more fluid response because the model isn’t starting from scratch for every auxiliary sub-task.
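The saving comes from not reprocessing the conversation. The toy sketch below counts how many tokens must pass through the model, comparing a hypothetical baseline that re-prefills the prompt plus an instruction for every auxiliary task against appending one Quick Instruction token to an already-cached prefix. `ToyKVCache`, the per-task instruction length, and forking the cache once per task are illustrative assumptions, not DeepSeek-V4's serving code; only the special-token names come from the source.

```python
from typing import List, Optional

QUICK_INSTRUCTION_TOKENS = ["<|action|>", "<|query|>", "<|authority|>", "<|domain|>", "<|title|>"]


class ToyKVCache:
    """Counts how many tokens actually go through the (imaginary) model."""

    def __init__(self, entries: Optional[List[str]] = None) -> None:
        self.entries = list(entries or [])
        self.computed = 0

    def extend(self, tokens: List[str]) -> None:
        self.entries.extend(tokens)
        self.computed += len(tokens)  # only new tokens cost a forward pass

    def fork(self) -> "ToyKVCache":
        # Reuse the cached prefix; real servers share memory instead of copying.
        return ToyKVCache(self.entries)


def baseline_cost(prompt: List[str], instruction_len: int) -> int:
    main = ToyKVCache()
    main.extend(prompt)  # prefill for the main answer
    total = main.computed
    for _ in QUICK_INSTRUCTION_TOKENS:
        aux = ToyKVCache()  # fresh cache: nothing is reused
        aux.extend(prompt + ["<instr>"] * instruction_len)
        total += aux.computed
    return total


def quick_instruction_cost(prompt: List[str]) -> int:
    shared = ToyKVCache()
    shared.extend(prompt)  # prefilled once and reused for the main answer
    total = shared.computed
    for token in QUICK_INSTRUCTION_TOKENS:
        branch = shared.fork()
        branch.extend([token])  # one extra token per auxiliary task
        total += branch.computed
    return total


prompt = ["tok"] * 1_000
print("baseline:", baseline_cost(prompt, instruction_len=20))
print("quick instructions:", quick_instruction_cost(prompt))
```

With these made-up numbers the quick-instruction path processes 1,005 tokens instead of 6,100. Because each branch only depends on the shared prefix, the auxiliary tasks are also independent of one another, which is what allows them to run in parallel.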

Conclusion: The Open-Source Frontier

DeepSeek-V4 represents a shift from "static" models to dynamic systems capable of long-horizon agentic tasks. By shattering the efficiency barrier, it redefines what is possible for open-source AI. We are moving toward a future where "online learning" and persistent AI agents are the norm, rather than the exception.

If the KV cache needed for a million tokens has just shrunk by 90%, and training has been stabilized at the 1.6-trillion-parameter scale, what complex problems are you finally ready to hand over to AI?