Bayesian Prior: Subjective Judgment with a Scientific Edge

Daily life is full of decisions. You meet a new colleague and don’t know whether they are reliable; you see a scoop on social media and want to share it, but fear it will be debunked; you feel a bit unwell and wonder whether it’s worth a trip to the hospital… You need to make a judgment, and you badly want the right answer.

But after the previous few lectures, you may already see that every decision carries some subjective component. Setting a value function, choosing a probability distribution, and picking a model at a certain granularity are all subjective choices. Nothing in the world is absolutely correct; every judgment involves some risk.

If you agree with this, you might be a “Bayesian.”

The thinking tool for this lecture is Bayesianism. We will focus on a concept mentioned before: the “prior.”

A prior is your original view of something. It can be singular or plural. It can refer to your judgment of the probability of a specific event, your conception of a field, or your entire worldview. Simply put, a prior is your preconception.

Yet a preconception is also the starting point of cognition, and this starting point can be quantified, updated, and audited.

✵

Laypeople say: “What is your view on this?” “After this event, my opinion has changed a bit.”

Scholars say: “What is your prior for this?” “This evidence has updated my prior.”

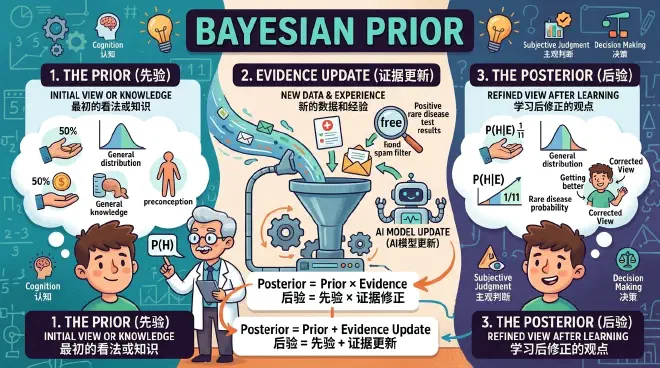

Scholars talk this way because they know the famous Bayes’ theorem. Whether or not you have studied it, here is what the formula looks like [1]:

$$P(H|E) = \frac{P(E|H) \cdot P(H)}{P(E)}$$

Every term in the formula is a probability. H represents a judgment, and E represents evidence. For example, H represents your friend having a rare disease, and E represents a positive test result for that disease at the hospital.

P(H) is the probability you judge them to have the disease before testing—the “Prior Probability.”

P(H|E) represents the probability you judge them to have the disease after having the evidence E. This is also called the “Posterior Probability.”

P(E) is the total probability of seeing the evidence (a positive test result) across all possibilities, whether or not H is true.

P(E|H) is generally called the “Likelihood,” meaning how likely we are to see the current evidence under the hypothesis H—it examines the compatibility of your worldview with reality.

If you are not interested, you can skip the details. In plain language, Bayes’ theorem says one thing: your current view (posterior) equals your original view (prior) multiplied by a correction factor from the new evidence (likelihood divided by evidence, P(E|H)/P(E)). If you take the logarithm of both sides, multiplication becomes addition, and the formula reads even more intuitively: log posterior = log prior + evidence update.
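For readers who want to check the log form, here is a minimal Python sketch. The function names and the illustrative numbers (a 30% prior, P(E|H) = 0.8, P(E|¬H) = 0.2) are assumptions for demonstration only; P(E) is expanded with the total-probability formula from note [2]. The two functions should agree, one multiplying and one adding logarithms.

```python
import math


def total_evidence(prior: float, p_e_given_h: float, p_e_given_not_h: float) -> float:
    """P(E) = P(E|H)P(H) + P(E|not H)P(not H)."""
    return p_e_given_h * prior + p_e_given_not_h * (1 - prior)


def posterior_multiplicative(prior: float, p_e_given_h: float, p_e_given_not_h: float) -> float:
    """Bayes' theorem as written: P(H|E) = P(E|H) * P(H) / P(E)."""
    p_e = total_evidence(prior, p_e_given_h, p_e_given_not_h)
    return p_e_given_h * prior / p_e


def posterior_additive(prior: float, p_e_given_h: float, p_e_given_not_h: float) -> float:
    """Same update in log space: log P(H|E) = log P(H) + log(P(E|H) / P(E))."""
    p_e = total_evidence(prior, p_e_given_h, p_e_given_not_h)
    evidence_update = math.log(p_e_given_h / p_e)  # the correction factor, now a sum
    return math.exp(math.log(prior) + evidence_update)


# Illustrative numbers only, not taken from the text.
print(posterior_multiplicative(0.3, 0.8, 0.2))
print(posterior_additive(0.3, 0.8, 0.2))
```

Both lines print about 0.632: the prior of 0.3 is multiplied by 0.8/0.38, or equivalently log(0.8/0.38) is added to its logarithm.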

Back to the story of the rare disease. Everyone who learns Bayes’ theorem encounters this example: Suppose the incidence of this disease is extremely low, only 0.1%. We can say P(H) equals 0.1%. We also assume the hospital’s test accuracy is very high: if a person is really sick, it returns positive in 99% of cases, so P(E|H) equals 0.99; if not sick, it returns negative with 99% probability, meaning only a 1% false positive rate.

How do we calculate P(E)? Here is a simple method [2]. Suppose 1,000 people get tested. With a 0.1% prior, only 1 of them is actually sick. That person has a 99% chance of testing positive, so there are 0.99, or about 1, true positives. The other 999 people are healthy, and with a 1% false positive rate they produce 9.99, or about 10, false positives. That makes roughly 11 positive results in total, so P(E) ≈ 11/1,000.

Continuing with this head count (or applying the formula directly), the posterior probability is P(H|E) ≈ 1/11: of the roughly 11 people who test positive, only about 1 is actually sick.
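The head count and the exact formula can be compared in a short Python sketch. It uses the standard `fractions.Fraction` type so the arithmetic stays exact; the variable names are illustrative. Rounding to whole people reproduces 1/11, while the unrounded formula from note [2] gives 11/122, about 9.0%.

```python
from fractions import Fraction

# Numbers from the example in the text.
prior = Fraction(1, 1000)          # P(H): incidence of 0.1%
sensitivity = Fraction(99, 100)    # P(E|H): a sick person tests positive
false_positive = Fraction(1, 100)  # P(E|not H): a healthy person tests positive

# Head count over 1,000 people.
population = 1000
sick = population * prior                              # 1 person
true_positives = sick * sensitivity                     # 0.99 people
false_positives = (population - sick) * false_positive  # 9.99 people

# Rounding to whole people, as in the text: 1 true positive out of 11 positives.
rounded = Fraction(round(true_positives), round(true_positives) + round(false_positives))

# Exact version, with P(E) from the total-probability formula in note [2].
p_evidence = sensitivity * prior + false_positive * (1 - prior)
exact = sensitivity * prior / p_evidence

print("P(E) =", p_evidence, "~", float(p_evidence))
print("rounded posterior:", rounded)
print("exact posterior:", exact, "~", float(exact))
```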

1/11 is much larger than 0.1%, but it is still quite a small probability! In other words, even though your friend tested positive on a test that is 99% accurate, the probability that they actually have the disease is only about 1/11. Why?

Because it is a rare disease, the prior probability of having it is very low. When the prior starts out very low, even strong evidence that multiplies it roughly a hundredfold still leaves the posterior low.
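To see how much the prior matters, this minimal Python sketch runs the same test (99% sensitivity, 1% false positives) against several priors. The function name and the extra prior values (1%, 10%, 50%) are hypothetical, chosen only for comparison.

```python
def posterior(prior: float, sensitivity: float = 0.99, false_positive: float = 0.01) -> float:
    """P(H|E) for a positive result, with P(E) from the total-probability formula."""
    p_evidence = sensitivity * prior + false_positive * (1 - prior)
    return sensitivity * prior / p_evidence


for prior in (0.001, 0.01, 0.10, 0.50):
    post = posterior(prior)
    print(f"prior {prior:6.1%} -> posterior {post:6.1%} (boost x{post / prior:.0f})")
```

With a 0.1% prior the positive result multiplies the probability about 90-fold yet leaves it near 9%; with a 1% prior the same evidence lands at 50%, and with a 10% prior it exceeds 90%.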

This is the composure of a Bayesian. If I believe football geniuses are extremely rare, then no matter how much you rave about a child on an elementary school team, or tell me a coach thinks he is the next Messi, I won’t be easily convinced. If I strongly disbelieve in ghosts, 15 ghost stories won’t make me afraid of them.

Your prior changes because of evidence, and the posterior is your updated prior.

✵

Thomas Bayes was an 18th-century figure who derived this formula in the 1740s. However, reading Bayes’ theorem as an “update of the prior,” and turning that reading into a full-fledged paradigm, only happened around the 1920s and 1930s… and it has been controversial ever since.

The point of contention lies in what probability actually is. When you say “probability,” are you describing the world, or are you describing your confidence in the world?

Traditional thinking, which is the probability theory you learned in high school, is called “Frequentism.” It treats probability as an objective property of things: for a fair coin, the probability of heads is 50%. This is a fixed number, a “true value.” Toss the coin enough times, and the observed frequency of heads approaches that objective probability.
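The frequentist picture, where the observed frequency approaches the true value, can be illustrated with a small Python simulation. The function name, the fixed seed, and the sample sizes are arbitrary choices for this sketch; `random.Random` is Python’s standard pseudo-random generator.

```python
import random


def heads_frequency(n_tosses: int, p_heads: float = 0.5, seed: int = 0) -> float:
    """Simulate n_tosses of a coin with P(heads) = p_heads; return the fraction of heads."""
    rng = random.Random(seed)  # fixed seed so runs are reproducible
    heads = sum(rng.random() < p_heads for _ in range(n_tosses))
    return heads / n_tosses


for n in (10, 100, 10_000, 100_000):
    print(f"{n:>7} tosses: frequency of heads = {heads_frequency(n):.4f}")
```

Small runs can stray well away from 0.5; large ones settle close to it. That convergence is exactly what requires many repetitions.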

But Bayesians ask: who tosses a coin infinitely many times? Most things in our lives can’t be repeated that often. Will this candidate be elected? Will that startup fail? Life does not replay ten thousand times! If an event happens only once, isn’t it absurd to talk about its frequency?

“Bayesianism” holds that probability is a degree of belief: it exists only in your mind and is really a quantification of your ignorance.

Frequentists are onlookers of the world, while Bayesians are participants in it, with subjective agency: every probability is a conditional probability, and you act to probe for evidence that changes your prior.

Different participants have different life experiences, and naturally, different priors. Bayesianism essentially says that probability is subjective.

✵

You might object that this is too unreliable: most people don’t have much experience of the world, so what is an arbitrary prior worth? Fair enough. So, as I understand it, the best way to use Bayesian prediction is to take the known frequency of the event, or the general consensus or scientific knowledge about it, as your prior: your starting point.

Take the rare disease example from the beginning. If we know nothing about that friend, we have to use the overall incidence of the disease in the population, 0.1%, as the prior probability that they are sick. But if we know more about them, such as age, living environment, prior health, and current symptoms, we can treat that information as evidence to update the prior: if the person is young, we adjust the probability down; if their health was already poor, we adjust it up…
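A minimal Python sketch of this step-by-step refinement, using the odds form of Bayes’ theorem (posterior odds = prior odds × likelihood ratio). The likelihood ratios below are invented purely for illustration and are not medical data; treating the pieces of evidence as independent given the disease status is a simplifying assumption of the sketch.

```python
def update(prior: float, likelihood_ratio: float) -> float:
    """One Bayesian update in odds form.

    likelihood_ratio = P(evidence | sick) / P(evidence | not sick):
    above 1 pushes the probability up, below 1 pushes it down.
    """
    odds = prior / (1 - prior) * likelihood_ratio
    return odds / (1 + odds)


# Hypothetical likelihood ratios, for illustration only.
EVIDENCE = [
    ("young", 0.5),               # makes the disease less likely
    ("poor prior health", 3.0),   # makes it more likely
    ("matching symptoms", 20.0),  # strong evidence for it
]

belief = 0.001  # start from the population incidence, 0.1%
for name, ratio in EVIDENCE:
    belief = update(belief, ratio)
    print(f"after '{name}': {belief:.4f}")
```

Because the ratios simply multiply the odds, the order in which the evidence arrives does not change the final answer under this independence assumption.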

In this way, you tailor the general probability to the specific person.

This is very useful. For example: can you write a simple algorithm to implement a spam filtering function?

Suppose we know that spam makes up 20% of all email. You cannot use this frequency directly; after all, you can’t just randomly mark 20% of emails as spam. What do you do?

An early classic algorithm [3] took the Bayesian approach. Certain words make an email look more like spam, such as “free”; others make it look more like a normal email, such as “meeting”… You can treat these words as evidence. By counting over a large collection of emails, you can estimate the likelihood of each piece of evidence.

Then you just set the initial prior to 20% and perform a Bayesian update each time you see a new word: seeing “free,” you nudge the probability up; seeing “meeting,” you nudge it down… After going through the whole email this way, you get an overall posterior for whether it is spam. If this posterior exceeds a chosen threshold, you mark the email as spam.
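Here is a minimal Python sketch of that bookkeeping, in the spirit of the naive Bayes filters described in [3] but not a reproduction of that paper’s system. It keeps the 20% prior from the text; the word list, its probabilities, and the 0.9 threshold are made up for illustration (a real filter would estimate them from a labeled corpus). Words are assumed independent given the class, and only words present in the email are counted. Working in log-odds turns each word’s update into an addition.

```python
import math

PRIOR_SPAM = 0.20  # base rate of spam, from the text

# Hypothetical (P(word | spam), P(word | normal)) pairs, for illustration only.
WORD_LIKELIHOODS = {
    "free": (0.30, 0.02),
    "winner": (0.10, 0.005),
    "meeting": (0.01, 0.15),
    "report": (0.02, 0.10),
}


def spam_probability(text: str) -> float:
    """Start from the prior's log-odds and add one log-likelihood ratio per known word."""
    log_odds = math.log(PRIOR_SPAM / (1 - PRIOR_SPAM))
    for word in set(text.lower().split()):  # each distinct word counted once
        if word in WORD_LIKELIHOODS:
            p_spam, p_normal = WORD_LIKELIHOODS[word]
            log_odds += math.log(p_spam / p_normal)
    return 1 / (1 + math.exp(-log_odds))  # convert log-odds back to a probability


def is_spam(text: str, threshold: float = 0.9) -> bool:
    """Flag the email when the posterior exceeds the (hypothetical) threshold."""
    return spam_probability(text) > threshold


print(spam_probability("Claim your FREE prize winner"))
print(spam_probability("Agenda for the meeting report"))
```

With these made-up numbers, “Claim your FREE prize winner” ends near 99% and is flagged, “Agenda for the meeting report” drops below 1%, and an email containing both “free” and “meeting” lands back at the 20% prior because the two updates cancel.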

In this way, the program is not guessing blindly but keeping an account.

Today’s large language models (LLMs) can be seen as giant Bayesian prediction machines. Training a model embeds the world’s priors into its parameters. That prior is very strong, which is why the model generally doesn’t talk nonsense.

When you give a model a prompt, you are inputting observational evidence, forcing it to update the posterior probability, thereby collapsing into a specific answer.

AI companies try to give models a prior that cannot be easily overturned by prompts, so that no matter how the user induces it, it can stick to certain bottom lines—this is “Alignment.” But no matter how strong that prior is, it is still a number that can theoretically be changed, which is why people always try to “jailbreak” the model…

From this perspective, isn’t each of us a walking large language model? Our past experiences, education, and family background make up our pre-training data: our priors.

✵

Connecting this to the “Free Energy Principle” mentioned earlier, we can say the human brain is an actively predicting Bayesian inference machine. Your expectation of the environment is your prior, and the surprises you receive are evidence.

For example, you pick up a cup to drink water. The cup is right in front of you; you have a pre-estimated position, weight, and general feel for it—this is your prior. You make the reaching motion based on the prior. When your fingertips touch the cup, if the sensation is different from what you expected—for instance, the cup is extremely hot—you experience a surprise, which serves as new evidence. Now you must update the prior and then issue commands to change your action, pulling your hand back…

The sequence is very clear: we always predict first, then receive feedback, then adjust. We don’t start from total darkness; we must have a prior first. As the saying goes, even a wrong map is better than no map.

If the evidence contradicts the prior, Bayes’ theorem requires us to update the prior—but the Free Energy Principle says that besides updating the prior (perceptual inference), you have another option: active inference. You can change the environment to make it fit your prior. If I thought the room was warm but it’s cold, do I just let it be cold? I can certainly turn up the heat.

But whatever you do, when the evidence doesn’t match your prior, you must do something; you cannot remain indifferent. As a line often attributed to John Maynard Keynes puts it: “When the facts change, I change my mind. What do you do, sir?”

✵

All of this sounds logical, but many people don’t actually think this way. They tend to make one of two mistakes.

The first mistake is having no prior at all—that is, not pre-setting any stance and looking purely at evidence.

If you think having no stance is scientific, you are gravely mistaken. You cannot assign the same prior probability to “the sun rises tomorrow” and “the sun explodes tomorrow,” nor would I advise being equally on guard against pickpockets in Paris and in Beijing. Having no prior means that a news story today makes you believe A, and another tomorrow makes you believe B; today your social feed says a company is about to crash and you sell everything, tomorrow you hear someone is buying the dip and you rush back in… Leftist today, rightist tomorrow.

Blowing with the wind is not humility; it just makes you unpredictable. Who can count on you then?

The second mistake is holding an unshakable prior: setting the prior probability to exactly 0 or 1, as absolute truth. The formula then says that no matter how strong the evidence is, your belief will never change. With P(H) = 0 the numerator is always 0; with P(H) = 1 we get P(E) = P(E|H), so the posterior is always 1.

Don’t do that. A true Bayesian never sets a probability to 0 or 1. This is called “Cromwell’s Rule,” after a 1650 letter from the English political leader Oliver Cromwell to the General Assembly of the Church of Scotland, in which he wrote: “I beseech you, in the bowels of Christ, think it possible you may be mistaken.”

Leave some room for “possibly being mistaken.” Even if you believe something very, very strongly, you can always set its prior probability to 99.999%, but don’t set it to 1.
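A short Python sketch makes Cromwell’s Rule concrete. It applies the same evidence three times to three priors; the evidence is assumed, for illustration, to be 1,000 times more likely if H is false. The function names and numbers are assumptions of this sketch.

```python
def posterior(prior: float, p_e_given_h: float, p_e_given_not_h: float) -> float:
    """Bayes' theorem with P(E) = P(E|H)P(H) + P(E|not H)P(not H)."""
    p_e = p_e_given_h * prior + p_e_given_not_h * (1 - prior)
    return p_e_given_h * prior / p_e


def after_repeated_evidence(prior: float, rounds: int = 3) -> float:
    """Apply the same piece of evidence against H several times in a row."""
    belief = prior
    for _ in range(rounds):
        belief = posterior(belief, p_e_given_h=0.001, p_e_given_not_h=1.0)
    return belief


for prior in (1.0, 0.99999, 0.0):
    print(f"prior {prior} -> after three rounds {after_repeated_evidence(prior):.6f}")
```

Priors of exactly 1 and exactly 0 never move, while 99.999% falls to about 0.01% after three rounds of contrary evidence.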

✵

The basic Bayesian routine is simple: first set a prior based on the environment, then treat specific events as evidence to update it.

How do you judge whether that new colleague is trustworthy? First consider the environment you are in: if the place has always been treacherous, set a low prior; if you are in a very good community, you can start with a high prior. But a prior is just a prior, and you must let people prove themselves. Do one or two concrete things with this person and use the results as evidence to update your prior.
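A minimal Python sketch of this routine, under loudly hypothetical numbers: a reliable colleague is assumed to keep a commitment 90% of the time and an unreliable one 40%; the starting priors of 0.3 (treacherous environment) and 0.8 (good community) are likewise illustrative. The names `KEPT`, `BROKEN`, and `trust_after` are inventions of this sketch.

```python
def update(prior: float, p_obs_if_reliable: float, p_obs_if_unreliable: float) -> float:
    """Bayes' theorem for H = "this colleague is reliable"."""
    p_obs = p_obs_if_reliable * prior + p_obs_if_unreliable * (1 - prior)
    return p_obs_if_reliable * prior / p_obs


# Hypothetical likelihoods: (P(observation | reliable), P(observation | unreliable)).
KEPT = (0.9, 0.4)    # the colleague kept a commitment
BROKEN = (0.1, 0.6)  # the colleague broke a commitment


def trust_after(prior: float, observations: list) -> float:
    """Update the prior once per observed interaction."""
    belief = prior
    for p_rel, p_unrel in observations:
        belief = update(belief, p_rel, p_unrel)
    return belief


for label, prior in (("treacherous environment", 0.3), ("good community", 0.8)):
    print(label, round(trust_after(prior, [KEPT, KEPT]), 3))
```

After the same two kept commitments, the cautious start rises to about 0.68 and the trusting one to about 0.95; a single broken commitment would pull either one down sharply (0.8 drops to 0.4).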

Traditional wisdom sometimes says “human nature is inherently good,” and sometimes “one should always be wary of others”; sometimes “one may know a person’s face but not their heart,” and sometimes “a prodigal son who returns is more precious than gold”… These sayings sound contradictory, but when viewed through the framework of Bayes’ theorem, they become crystal clear.

The prior is both our wealth and our prison. Evidence is not an absolute brick of truth, but the currency of belief transactions.

Every experience is a process of buying more accurate beliefs with evidence.

If our course can slightly change your prior about the world, that would be my greatest honor.

Notes

[1] If you have studied a little conditional probability, this formula is easy to prove: multiply both sides by P(E), and both sides become the probability that H and E occur together, P(H|E)P(E) = P(E|H)P(H) = P(H and E).

[2] The exact formula is P(E) = P(E|H)P(H) + P(E|¬H)P(¬H).

[3] Sahami, Mehran, Susan Dumais, David Heckerman, and Eric Horvitz. 1998. “A Bayesian Approach to Filtering Junk E-mail.” In AAAI Workshop on Learning for Text Categorization.