Granularity and Causal Mediation: How to Think with Models

Correct decision-making requires a correct understanding of things. Let’s start with a common misconception.

A piece of street philosophy often circulates online: “If you want to avoid cancer… you must let go, be mentally tough like a bastard… don’t suppress your feelings… don’t be so twisted inside. This is how to reduce the occurrence of cancer!”

Many people believe that cancer is “caused by anger,” but I must say, that is pseudoscience.

The American Cancer Society (ACS) has a dedicated science-education page [1], explicitly stating that research shows a person’s personality, thoughts, and emotions do not cause cancer, nor do they affect whether cancer recurs. The National Cancer Institute (NCI) is slightly more conservative [2], saying there is “no clear link” between stress and cancer.

In fact, a series of large-scale longitudinal studies has long existed. For instance, a study of over 100,000 British women [3] found that the frequency of stress and adverse life events was unrelated to breast cancer risk. A massive meta-analysis of over 110,000 people [4] showed that after controlling for factors like smoking and alcohol consumption, so-called “job strain” had no correlation with the risk of any major cancer. Further research [5] found that anger control and negative affect generally had no significant correlation with cancer risk.

You might say, “Wait, doesn’t the ‘Elite Daily Lesson’ column often talk about the various harms of stress on health? Why doesn’t stress cause cancer?”

Scientists explain this clearly. Those studies say that stress does not directly cause cancer—but stress may have an indirect relationship with cancer. For example, stress might lead you to develop unhealthy lifestyle habits, such as smoking, drinking, or overeating, which do increase the risk of cancer.

These are two very different stories. Understanding the difference between these two stories is essential for learning how to control complex systems.

Simply put, to manipulate a thing, you must build a “model” of that thing in your mind.

✵

Let’s first look at what happens without a model. Imagine the manager of a university dormitory who detests noise in the building after lights-out. If he knows nothing about the dormitory’s situation, his only response to noise is to go out and shout for everyone to be quiet, perhaps even knocking on every door.

However, if the manager has a model of the dormitory in his head—knowing, for example, that Room 305 has a subwoofer, the student in Room 117 loves playing online games after lights-out, and the water pump on the second floor has been broken lately, causing everyone to wash up slowly—he doesn’t need to engage in impotent rage. Instead, he can intervene precisely based on the type of noise.

In reality, many people are like that dormitory manager: they brew goji berry tea when their health metrics are poor, demand overtime from employees when a project is delayed, or lash out at their children when grades slip. They don’t know what is actually happening, but they feel compelled to take drastic action.

A model is a structural mirror of a thing in your mind, helping you see the underlying mechanisms of its operation. Without a model, you are facing a black box, only able to react impulsively to input signals; with a model, you know where the buttons are.

In the field of cybernetics, there is a well-known result called the “Good Regulator Theorem,” proposed in 1970 by Roger C. Conant and W. Ross Ashby [6]. Its core claim is simple:

“Every good regulator of a system must be a model of that system.”

The theorem argues, in mathematical terms, that the best regulator of a system behaves as a model of it: your cognitive structure has to mirror the structure of the thing you want to control. If your model is far simpler than the real world, for example explaining complex biology with “cancer is caused by anger,” you lose critical information, and your decisions are likely to be wrong.

Simply put: you can only control something to the extent that you understand it.

What should a good model look like? We have at least two requirements.

✵

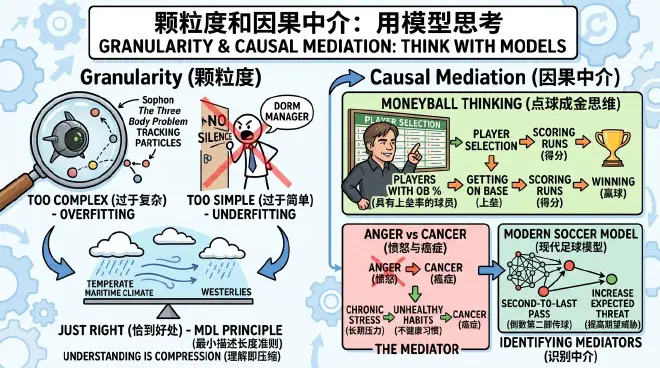

The first requirement is that the complexity of the model, what white-collar workers love to call “granularity,” must be just right. Too simple and coarse is bad, but too complex is also bad. If you try to track every particle in the world like a “Sophon” from The Three-Body Problem, your computational power will be overwhelmed, and you’ll end up focusing on useless details.

A model must be a compression of the real world, and this compression must be just right. You’ve long heard of “Occam’s Razor”—“entities should not be multiplied beyond necessity.” Information theory has a principle that essentially turns Occam’s Razor into a calculable criterion, called “Minimum Description Length (MDL)” [7].

In short, the MDL criterion requires that the best model is the one that minimizes the sum of two terms:

- Model Length: The number of bits required to describe the model itself—how complex your model is.

- Data Patch Length: The number of bits required to describe whatever the model fails to capture, that is, how many extra corrections others need when they use your model to explain reality.

The best model is the one that explains the most data with the shortest code length.
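In symbols, the two-part criterion is commonly written as below. The notation is the conventional one rather than anything taken from this article: $M$ is a candidate model from a set $\mathcal{M}$, $D$ is the data, and $L(\cdot)$ is a code length in bits.

```latex
\hat{M} \;=\; \arg\min_{M \in \mathcal{M}} \Bigl[\, L(M) + L(D \mid M) \,\Bigr]
```

Here $L(M)$ plays the role of the Model Length and $L(D \mid M)$ plays the role of the Data Patch Length.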

For example, if someone asks what the local weather is like: if you describe the weather for every single day over the past year, it’s too long; without compression, there’s no understanding. This is called “overfitting.” If you just say “it always rains here,” it’s too short; that’s “underfitting.”

A “just right” description would be: “This area has a temperate maritime climate, controlled by the westerlies. It’s mild and humid year-round. Although there are many overcast and rainy days, it’s mostly drizzle, and heavy storms are rare.” In just a few dozen words, there’s a pattern, a mechanism, and it represents a lot of data. This is a good model.
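To make the weather comparison concrete, here is a minimal sketch that counts bits for three descriptions of one year. Everything in it is an illustrative assumption: the year with 73 dry days out of 365, the 1-bit and 8-bit model costs, and the crude way exceptions are coded. It is not a rigorous MDL coding scheme.

```python
import math

def entropy_bits(p: float) -> float:
    """Shannon entropy of a yes/no event with probability p, in bits."""
    if p in (0.0, 1.0):
        return 0.0
    return -(p * math.log2(p) + (1 - p) * math.log2(1 - p))

def description_lengths(n_days: int, n_dry: int) -> dict:
    """Total bits (model + patches) for three ways of describing a year."""
    # Too long: the "model" is the full diary, one bit per day, nothing left to patch.
    diary = n_days * 1.0 + 0.0
    # Too short: "it always rains" costs about 1 bit, but every dry day must be
    # patched by naming which day it was (log2(n_days) bits each).
    always_rain = 1.0 + n_dry * math.log2(n_days)
    # Middle: state the rain frequency with 8 bits of precision (assumed),
    # then pay the entropy cost per day for the remaining uncertainty.
    p_rain = (n_days - n_dry) / n_days
    climate = 8.0 + n_days * entropy_bits(p_rain)
    return {"diary": diary, "always_rain": always_rain, "climate": climate}

# Hypothetical year: 365 days, 73 of them dry.
lengths = description_lengths(n_days=365, n_dry=73)
best = min(lengths, key=lengths.get)
for name, bits in sorted(lengths.items(), key=lambda kv: kv[1]):
    print(f"{name:12s} {bits:7.1f} bits")
```

Under these assumptions the middle description wins: the diary pays everything in model length, “it always rains” pays everything in patches, and the climate summary pays a little of each.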

Thinking with models requires the skill of discarding details to reach the essence. Sometimes, keeping a certain psychological distance from a thing actually raises your level of explanation [8], because you are better able to abstract and compartmentalize the problem.

Why is the stock market falling recently? If you look at online discourse, you could list 100 reasons: central bank interest rate hikes, geopolitics, a certain company’s earnings report, the comments of a famous influencer… a pile of noise that leaves you confused. A master’s model is always highly compressed: “Tightening liquidity leads to the repricing of risky assets.” This one sentence explains 80% of the volatility.

There is a maxim: “Understanding is Compression.” Master this skill, and you will be the legendary person who “sees through the essence of things in half a second.”

✵

The second requirement for a good model is that it contains causal relationships. With causality, you can explain the mechanism of things.

We aren’t talking about philosophy here—from a purely philosophical perspective, whether true causality exists in the world is debatable. We use the insight of Turing Award winner Judea Pearl from his book The Book of Why [9]: we don’t need to fully explain absolute causality; what we really want to know is: what action can I take to intervene in the occurrence of this thing?

Two things that often happen together may not have a causal relationship; it might just be correlation. However, if I forcibly intervene (Pearl’s term is the “do-operator”)—unilaterally making event X happen while keeping all other factors constant—and the probability of event Y increases, then I can say X has a causal effect on Y, or at least X is a cause of Y, denoted as X → Y.

For example, every morning the rooster crows and the sun comes out. That’s a correlation. To know if there’s causality, you must perform an intervention: one day you forcibly intervene, muzzling the rooster, and see if the sun still comes out. If the sun rises as usual, it means there is no causal relationship between the rooster crowing and the sun rising.

Conversely, if you walk into a dark room and, purely of your own free will, unilaterally and suddenly flip a switch and the light turns on, we can say there is a causal relationship: Switch → Light On.
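The difference between observing and intervening can be sketched with a small simulation. This is a toy world with assumed numbers, not Pearl’s formal machinery: dawn is a hidden common cause, the rooster usually crows at dawn, and the sun is up exactly when it is dawn. The `do_crow` argument is a hypothetical stand-in for the do-operator: it overrides the rooster regardless of dawn.

```python
import random
from typing import Optional

def sample_hours(n: int, do_crow: Optional[bool] = None, seed: int = 0):
    """Sample n random hours; return (crow, sun_up) pairs."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        dawn = rng.random() < 0.5                       # hidden common cause
        natural_crow = rng.random() < (0.9 if dawn else 0.05)
        # Intervention: force the rooster's behavior, ignoring dawn.
        crow = natural_crow if do_crow is None else do_crow
        sun_up = dawn                                   # the sun ignores the rooster
        rows.append((crow, sun_up))
    return rows

def p_sun(rows, crow_value: bool) -> float:
    """Share of hours with the sun up among hours where crow == crow_value."""
    matching = [sun for crow, sun in rows if crow == crow_value]
    return sum(matching) / len(matching)

# Observation: crowing and sunshine go together.
observed = sample_hours(10_000)
obs_gap = p_sun(observed, True) - p_sun(observed, False)

# Intervention: same hours (same seed), rooster forced to crow or muzzled.
forced = sample_hours(10_000, do_crow=True)
muzzled = sample_hours(10_000, do_crow=False)
do_gap = p_sun(forced, True) - p_sun(muzzled, False)

print(f"observed gap:   {obs_gap:.2f}")
print(f"intervened gap: {do_gap:.2f}")
```

The observed gap is large because dawn drives both variables, while the gap under intervention vanishes. A switch and a light would behave the other way: forcing the switch would move the light.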

Your model should contain several causal relationships, ideally with variables linked by arrows in a diagram to clearly display the mechanism of the whole affair.

✵

Now comes the crucial insight: no model can exhaust all causal relationships in a thing. Too many is bad, too few is bad; reaching the Minimum Description Length is what matters.

As we mentioned earlier, a vast amount of research indicates that the correlation between anger and cancer is very small: many people get angry frequently without getting cancer, and many who rarely get angry do get cancer. If you go looking for a correlation, some studies do show that angry people have a slightly higher probability of developing cancer than others. But concluding “Anger → Cancer” from this would be irresponsible.

This chain is too coarse and the effect too fragile. The responsible approach is to see if there are indirect effects, so-called “Mediators”: what does anger cause, and then what does that cause to lead to cancer?

The National Cancer Institute explicitly points out [2] that chronic psychological stress may make people more prone to unhealthy lifestyle habits, including overeating, lack of exercise, smoking, and alcohol abuse. These are the more definite cancer risk factors. So the more accurate causal chain is:

Anger → Unhealthy Lifestyle Habits → Cancer

Those studies say that if you exclude the influence of these unhealthy habits, there is almost no relationship between anger and cancer.

By identifying the mediator of “unhealthy lifestyle habits,” things become actionable: even if your mood is poor, as long as you keep exercising and controlling your diet, “anger” by itself should add little or nothing to your cancer risk.
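The logic of “excluding the influence of unhealthy habits” can be sketched as a simulation. All probabilities below are invented for illustration and are not taken from the cited studies; the only structural assumption is the chain above, with no direct arrow from anger to cancer.

```python
import random

def simulate_people(n: int, seed: int = 1):
    """Return (angry, unhealthy, cancer) triples under Anger -> Habits -> Cancer."""
    rng = random.Random(seed)
    people = []
    for _ in range(n):
        angry = rng.random() < 0.30
        # Anger raises the chance of unhealthy habits (assumed numbers).
        unhealthy = rng.random() < (0.60 if angry else 0.30)
        # Cancer risk depends only on habits, never directly on anger.
        cancer = rng.random() < (0.10 if unhealthy else 0.04)
        people.append((angry, unhealthy, cancer))
    return people

def risk(people, angry: bool, unhealthy=None) -> float:
    """Cancer rate among people with the given anger (and optionally habit) status."""
    group = [c for a, u, c in people
             if a == angry and (unhealthy is None or u == unhealthy)]
    return sum(group) / len(group)

people = simulate_people(200_000)

# Crude comparison: angry people look riskier.
crude_gap = risk(people, True) - risk(people, False)
# Compare like with like: hold the mediator fixed.
gap_unhealthy = risk(people, True, True) - risk(people, False, True)
gap_healthy = risk(people, True, False) - risk(people, False, False)

print(f"crude gap:              {crude_gap:+.3f}")
print(f"gap, unhealthy habits:  {gap_unhealthy:+.3f}")
print(f"gap, healthy habits:    {gap_healthy:+.3f}")
```

In this toy world the crude gap is clearly positive, but it shrinks to roughly zero once people with the same habits are compared, which is the pattern the mediator story predicts.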

With a good model, you can seize the control points.

✵

Let’s look at a classic case from Michael Lewis’s book Moneyball [10].

Baseball has been popular in the US for many, many years, but for a long time, the “models” of baseball people were very coarse. Scouts believed that Star Players → Winning: you have to buy players who can hit home runs, are handsome, and have graceful postures… and this made players who fit that image extremely expensive.

Around 2002, Billy Beane, the General Manager of the Oakland Athletics, was forced by a lack of money to adopt a new baseball model: winning requires scoring runs, and scoring runs requires getting on base!

(Hitting + Eye for the Ball) → Getting on Base → Scoring Runs → Winning

Getting on base is the critical, overlooked mediator: whether you get a hit, draw a walk, or are hit by a pitch, as long as you get on base, you create a scoring opportunity. And in the market, those players who were “ugly, had weird stances, couldn’t hit home runs but were great at getting on base” were as cheap as dirt.

So Beane bought a group of players despised by traditional scouts… and the result was a 20-game winning streak in 2002, an American League record at the time, on a minimal payroll.

Many say this was the victory of “Big Data.” But what is Big Data? Without a model, how do you know how to analyze data? This was a decisive victory of causal models over intuition.

Now, let’s apply the Moneyball thinking to soccer. What do you think is the “getting on base” of soccer? You might think of “assists,” the final pass before a goal. But this model is too simple; commentators and fans have long known the value of assists, and they have their own rankings… there is no price premium there.

Modern soccer experts use a model where the real killer move is often not the shot or the final pass—but the second-to-last or third-to-last pass, or a dribble: it’s the action that tears open the defense and changes the probability structure of the field [11].

Initially, the offense and defense are balanced. A player among the crowd seemingly makes a casual pass, moving the ball from a low-threat area to a high-threat area, and the goal probability suddenly jumps from 2% to 30%. The model says this pass increased the “Expected Threat (xT).”
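A minimal sketch of how such a value can be computed, loosely following the recursive idea in [11]: the threat of a zone is the chance of scoring directly from it plus the threat of the zones the ball tends to move to next. The three-zone pitch and every probability below are made up for illustration, and the `MOVE` table folds “chooses to move” and “where the ball ends up” into a single number, which is a simplification of the original formulation.

```python
ZONES = ["own_half", "midfield", "box"]

# Assumed, illustrative probabilities (not fitted to real match data).
SHOOT = {"own_half": 0.00, "midfield": 0.05, "box": 0.50}  # P(shoot | ball in zone)
GOAL = {"own_half": 0.00, "midfield": 0.03, "box": 0.30}   # P(goal | shot from zone)
# MOVE[a][b]: P(ball goes from a to b and possession is kept).
# Probability mass missing from a row stands for shots and turnovers.
MOVE = {
    "own_half": {"own_half": 0.50, "midfield": 0.35, "box": 0.02},
    "midfield": {"own_half": 0.20, "midfield": 0.40, "box": 0.15},
    "box":      {"own_half": 0.02, "midfield": 0.18, "box": 0.15},
}

def expected_threat(iterations: int = 200) -> dict:
    """Iterate xT(z) = shoot * goal + sum over next zones of move * xT(next)."""
    xt = {zone: 0.0 for zone in ZONES}
    for _ in range(iterations):
        xt = {
            zone: SHOOT[zone] * GOAL[zone]
            + sum(MOVE[zone][nxt] * xt[nxt] for nxt in ZONES)
            for zone in ZONES
        }
    return xt

xt = expected_threat()
# Value of a completed pass = threat at the destination minus threat at the origin.
pass_value = xt["box"] - xt["midfield"]

for zone in ZONES:
    print(f"{zone:9s} xT = {xt[zone]:.3f}")
print(f"midfield -> box pass adds {pass_value:.3f}")
```

The point of the design is that a pass is credited for the change in threat it produces, even if the goal comes two or three actions later.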

Don’t just watch the finish line; watch the mediators.

✵

Let’s look at a few small application cases to help you get a feel for it.

Take buying a phone, for example. Some say, “I just want the newest, most expensive iPhone,” or “I’ll just buy something cheap.” These are models that are too short. Others spend days researching phone specs, comparing prices, and reading reviews, but never end up buying one. That model is too long.

The MDL criterion requires just enough information. First, think about what you’re buying the phone for: are you just taking casual photos of your kids, or do you need to take night shots? Are you playing games or just watching short videos? Consider only a few key variables, compress the model to its simplest form, and only then can you make an accurate decision.

Or take learning. When a child’s grades are poor, parents shout, “Why aren’t you working hard?” But learning has many steps. Which step is the problem? You need a model to analyze. Let’s use the simplest model:

(Time + Attention) → Memory Encoding → Retrieval → Grades

If the investment of time and attention is insufficient, the child indeed isn’t working hard. If there’s a problem with memory encoding, the teacher hasn’t taught well or the materials are wrong. If it’s a retrieval problem, there’s insufficient practice. Some parents only know how to focus on study time because they can only understand “time.”

Then there’s company management. Some bosses love long models, with detailed regulations for every possible scenario; employees simply can’t execute them. Some bosses love short models, even saying “I just want results, you figure it out…”

Good management should first set a few core values to cover complex scenarios as much as possible. Then, build causal relationships for the company’s core business: if the end point is revenue, then the mediators might be retention, conversion rate, channel quality, product experience, delivery speed; and further back, team collaboration, decision efficiency, information flow, etc.

If you only focus on revenue, you’ll only do short-term promotions, which is underfitting. If you focus on all those mediators and require daily reports for each, you’ll be overwhelmed and fall into overfitting.

The MDL criterion requires you to find one or two of the most critical mediators to intervene in—those are the “getting on base” and “threat passes.”

✵

High-level decision-makers know what they should be looking at. They use the Minimum Description Length to compress things into a model. They grasp the mechanisms, draw causal diagrams, and make sure they find the key mediators.

In truth, there is no absolute standard for granularity. How detailed a model should be is determined by your subjective needs. And you will always encounter surprises on the ground.

There is a maxim: “The map is not the territory.” Turning the world into a model is always dangerous, but if you don’t even have a map, you can’t even get on the road.

Notes

[1] American Cancer Society. “Do Feelings and Attitudes Have an Effect on Cancer?” Updated November 21, 2025. https://www.cancer.org/cancer/coping/attitudes-feelings-and-cancer.htm

[2] National Cancer Institute. “Stress and Cancer.” https://www.cancer.gov/about-cancer/coping/feelings/stress-fact-sheet

[3] Schoemaker, Minouk J., et al. “Psychological stress, adverse life events and breast cancer incidence: a cohort investigation in 106,000 women in the United Kingdom.” Breast Cancer Research 18, no. 1 (2016): 72.

[4] Heikkilä, Katriina, Solja T. Nyberg, Tores Theorell, Eleonor I. Fransson, Lars Alfredsson, Jakob B. Bjorner, Marianne Borritz, et al. “Job Strain and the Incidence of Cancer: An Individual-Participant Meta-Analysis of 116,000 European Men and Women.” BMJ (British Medical Journal) 346 (2013): f165.

[5] White, V. M., et al. “Is Cancer Risk Associated with Anger Control and Negative Affect? Findings from a Prospective Cohort Study.” Psychosomatic Medicine 69, no. 7 (2007): 667–674.

[6] Conant, Roger C., and W. Ross Ashby. “Every Good Regulator of a System Must Be a Model of That System.” International Journal of Systems Science 1, no. 2 (1970): 89–97.

[7] Grünwald, Peter D. The Minimum Description Length Principle. Cambridge, MA: MIT Press, 2007.

[8] Trope, Yaacov, and Nira Liberman. “Construal-Level Theory of Psychological Distance.” Psychological Review 117, no. 2 (2010): 440–463.

[9] Pearl, Judea, and Dana Mackenzie. The Book of Why: The New Science of Cause and Effect. New York: Basic Books, 2018. Note: The “Elite Daily Lesson” column has decoded this book: “Insights into Three Types of Causal Thinking.”

[10] Lewis, Michael. Moneyball: The Art of Winning an Unfair Game. New York: W. W. Norton, 2003.

[11] Singh, Karun. “Introducing Expected Threat (xT): Modelling team behaviour in possession to gain a deeper understanding of buildup play.” Blog post, December 24, 2018. https://karun.in/blog/expected-threat.html