Probability Distribution: What Exactly is Decision-Making?

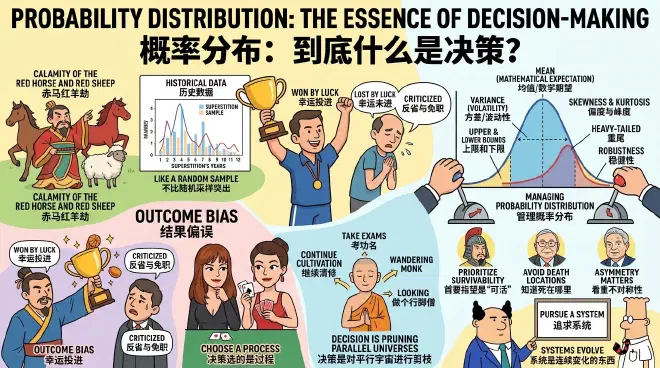

Have you ever heard of the folk superstition known as the “Calamity of the Red Horse and Red Sheep”? It suggests that 2026 (the Year of the Bing-Wu Horse) and 2027 (the Year of the Ding-Wei Sheep) combine to form the “Red Horse and Red Sheep,” and according to certain apocryphal prophecies, every time these two years occur, the nation faces catastrophe.

Looking back, the last “Red Horse/Red Sheep” period was 1966–1967, which saw the start of the Cultural Revolution. Before that, 1906–1907 brought the political turmoil of the Qing Dynasty’s constitutional preparations. Even further back, the “Jingkang Incident” of the Northern Song Dynasty happened during such years! It seems like trouble always follows, right? Does Chinese history truly possess such a mysterious cyclical law?

In truth, you don’t need to understand mysticism to debunk this, as it is easily verifiable. I asked GPT to list what happened in Chinese history during every “Red Horse/Red Sheep” period over the last two millennia, scoring each based on the severity of the disasters.

…

Indeed, some of those years saw great calamities. However, many others saw only minor events or nothing at all. You must realize that ancient China was inherently prone to disaster; it was either experiencing a catastrophe or brewing one. The real question is: were these specific years significantly worse than others?

GPT listed the major periods of upheaval in Chinese history, such as the War of the Eight Princes, the Sixteen Kingdoms, and the An Lushan Rebellion, following mainstream textbooks. Out of 34 sets of “Red Horse/Red Sheep” years, 13 fell within these periods, or 38%. Now for the crucial part: if you randomly sample consecutive two-year periods from those same two millennia, about 37% of them also fall within major upheavals.

In other words, major upheaval is no more likely in “Red Horse and Red Sheep” years than in any randomly chosen pair of years.
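
One way to make this comparison concrete is a simple binomial check. The sketch below uses only the three figures quoted above (34 sets, 13 hits, a 37% baseline) and asks how often luck alone would produce 13 or more hits. Treating the 34 sets as independent draws is a simplifying assumption, and the function name is purely illustrative.

```python
from math import comb

def prob_at_least(hits: int, trials: int, base_rate: float) -> float:
    """P(X >= hits) for X ~ Binomial(trials, base_rate)."""
    return sum(
        comb(trials, k) * base_rate**k * (1 - base_rate) ** (trials - k)
        for k in range(hits, trials + 1)
    )

# Figures from the text: 34 sets, 13 inside upheaval periods, 37% baseline.
p_value = prob_at_least(13, 34, 0.37)
print(f"Observed share: {13 / 34:.0%}")
print(f"Chance of 13 or more hits by luck alone: {p_value:.2f}")
```

Under that assumption the result comes out at roughly even odds, which is what you would expect when the observed count sits right at the baseline.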

So, do you still believe in the superstition?

This sampling verification we just performed is entry-level “Decision-Maker Thinking.” The “entry point” is realizing that you cannot judge things based on a single result; you must look at the statistics.

Decision-making is not about a single outcome; it is about a “probability distribution.” This is our mental tool for this lesson.

✵

The average person’s mindset is to “judge heroes by success or failure.” In a high-stakes basketball game with a tied score: if the team luckily sinks a basket at the buzzer to win by one point, everyone is happy—the coach and players are celebrated as heroes. But if that same shot misses and they lose by one, everyone is forced into “self-reflection,” and the coach might even get fired. This is a common scene, but don’t you find it absurd?

You should judge a coach’s ability based on the results of many games and a comparison of the team’s performance before and after their arrival. Only through statistical synthesis can you know if the coach is competent. In reality, people rarely have such patience. Someone says drunk driving is dangerous, and another responds, “I drove home completely wasted last time and nothing happened.” You say you can’t rely on the lottery to get rich, and someone points out, “But someone just won millions last week.”

Evaluating the quality of a decision by its immediately visible result is what psychology calls “outcome bias” [1]. Annie Duke, who holds a PhD in cognitive psychology, calls it “resulting.” Duke, a former professional poker champion, argues in her book Thinking in Bets [2] that life is more like poker than chess: your results are often determined not by your strategy but by the hand you were dealt.

If you want to evaluate whether a strategy is good, you cannot look at one or two results; you must look at the aggregate statistics.

For most people, “statistics” means digging through the accumulated records of history, which demands more computation and long-term memory than they can spare. So they let themselves be swayed by short-term impressions and by information that has nothing to do with the decision.

As a result, the boss who gambled recklessly but happened to catch a favorable market is hailed as “decisive and bold,” and the CEO who loses more often than they win but tells a great story dominates the headlines, while the person who reasoned rigorously but suffered an accidental, crushing defeat is ruthlessly sacrificed.

If we always prioritize the most visible and story-rich results, we are no different from gamblers and will never learn the true skill of decision-making.

✵

The more you understand decision science, the less you expect from a single decision: decision-making is a very limited act. In the previous lesson, we discussed the “No Free Lunch Theorem,” knowing that decisions cannot be absolutely objective or neutral; you must inject subjective factors and take active risks. In this lesson, we go a step further: what you truly decide is a process, not a result.

Or, more strictly, what you decide is what to discard.

The word “decision” comes from the Latin verb decidere, “to cut off,” formed from de (“off”) and caedere, which means “to cut” and also “to strike down” or “to kill.” Faced with a pile of options, your decision is not just about which one to choose, but about “killing” the others (even if they are equally beautiful, perhaps more correct, or more tempting) in order to bet on one that is ultimately uncertain.

Imagine a monk in a remote mountain facing a life decision with three options: first, continue his quiet cultivation in the mountains, hoping to achieve enlightenment; second, descend the mountain to take the imperial exams and become an official; third, become a wandering monk, traveling the world and visiting famous mountains and rivers.

If you think he is choosing between being a high monk, a high official, or a world traveler, you are mistaken. Reality is full of uncertainty: staying in the mountains doesn’t guarantee enlightenment, taking the exams doesn’t guarantee passing, and wandering might lead to an early death on the road. Making a decision is not “pre-ordering” a future.

Decision-making is the pruning of parallel universes.

Choosing the “exams” means killing the peace of the “cultivation” universe and the freedom of the “wandering” universe—those are the opportunity costs. Then, you must accept everything that comes with the “exams” universe: perhaps you become a great official, but perhaps you fail for years, or become a lowly clerk, or get caught in political strife, and so on.

Decision-making is a violent act. It is the elimination of options that look good, the abandonment of potentially better possibilities, and the payment of opportunity costs—without any guarantee that your chosen path will be better. This is why decision-making often feels painful, leading many to hesitate indefinitely.

✵

You might say, “I get it: decision-making is picking probabilities; we should pick the option with the best mathematical expectation!” That is still not quite rigorous. In fact, decision-making is about choosing a “Probability Distribution.” To consider a decision, we need to look at at least these parameters:

- Mean (Mathematical Expectation): On average, how much do you win by taking this bet?

- Variance: Is the situation highly volatile or stable?

- Upper and Lower Bounds: What is the most you can win, and what is the most you can lose?

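- Skewness: Is the distribution lopsided? Does the long tail sit on the winning side (many small losses, an occasional big win) or on the losing side (many small wins, an occasional big loss)?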

- Kurtosis: Is it a “heavy-tailed” distribution? If so, extreme events are far more likely than a bell curve would suggest.

- Robustness: If the environment changes, will this system collapse?
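
Most of the parameters above can be estimated from a sample of outcomes. The following is a minimal sketch using Python's standard library; the two payoff lists are invented for illustration, and the function assumes the outcomes are not all identical. Robustness is left out because it describes how the distribution shifts when the environment changes, not a property of one sample.

```python
from statistics import fmean, pvariance

def describe(outcomes: list[float]) -> dict[str, float]:
    """Summarize a sample of payoffs by the shape of its distribution."""
    mean = fmean(outcomes)
    variance = pvariance(outcomes, mu=mean)
    std = variance ** 0.5
    # Standardized third and fourth moments (population versions).
    skewness = fmean([((x - mean) / std) ** 3 for x in outcomes])
    kurtosis = fmean([((x - mean) / std) ** 4 for x in outcomes])
    return {
        "mean": mean,
        "variance": variance,
        "lower_bound": min(outcomes),
        "upper_bound": max(outcomes),
        "skewness": skewness,
        "excess_kurtosis": kurtosis - 3.0,  # 0 for a normal distribution
    }

# Hypothetical payoffs, invented purely for illustration.
steady_job = [1.0, 2.0, 2.0, 3.0, 2.0]
long_shot = [-1.0] * 9 + [20.0]

for name, sample in [("steady_job", steady_job), ("long_shot", long_shot)]:
    stats = describe(sample)
    print(name, {k: round(v, 2) for k, v in stats.items()})
```

In this made-up data the long shot has the lower mean but a strongly positive skew and a far higher upper bound, which is exactly the kind of difference a single average hides.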

Take the monk’s case again:

- Staying in the mountains: The lower bound is an anonymous, poor monk; the upper bound is a legendary master. But since a master’s life is also simple, the variance is small and extremely stable.

- Descending for exams: The variance is massive. The upper bound is a high official, and there is a heavy tail: you use power to get money, then money to get more power. But there is also massive downside risk; the lower bound is terrible. Most often, you simply fail, so the overall average is not high.

- Wandering: The randomness is too strong. It could end in failure or a miracle. We simply don’t know what’s on the road; more information is needed.

Decision-making is managing the distribution of your future. You are gambling with your understanding of the world: at the very least, you must think about expectation, variance, tail risk, and robustness.

Once you understand this, the primary goal of decision-making is not “victory,” but “survivability.”

✵

Sun Tzu’s Art of War says: “The skillful fighters of old first put themselves beyond the possibility of defeat, and then waited for an opportunity of defeating the enemy. To secure ourselves against defeat lies in our own hands, but the opportunity of defeating the enemy is provided by the enemy himself.” Charlie Munger said: “All I want to know is where I’m going to die, so I’ll never go there.” This means first checking if you can accept the lower bound of the future result, then considering if the upper bound is worth the effort.

Nassim Nicholas Taleb said: “Never cross a river if it is on average four feet deep.” This means you can’t just look at the mean; you must manage tail risk and check whether the distribution contains extreme cases.

George Soros said: “It’s not whether you’re right or wrong that’s important, but how much money you make when you’re right and how much you lose when you’re wrong.” This is even more fundamental: the key is not how many times you are right, but whether there is an asymmetry between your wins and losses such that your mathematical expectation is profitable.
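
The arithmetic behind this asymmetry is short enough to write out. The sketch below compares two hypothetical bettors, one who is right 90% of the time and one who is right only 30% of the time; all payoff sizes are invented for illustration.

```python
def expected_value(p_win: float, gain: float, loss: float) -> float:
    """Average payoff per bet: win `gain` with probability p_win, else lose `loss`."""
    return p_win * gain - (1 - p_win) * loss

# Hypothetical bettors: the first is usually right, the second usually wrong.
often_right = expected_value(p_win=0.9, gain=1.0, loss=10.0)
rarely_right = expected_value(p_win=0.3, gain=10.0, loss=1.0)

print(f"Right 90% of the time: {often_right:+.2f} per bet")
print(f"Right 30% of the time: {rarely_right:+.2f} per bet")
```

With these numbers the bettor who is usually right loses money on average, while the one who is usually wrong comes out ahead.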

Decision-making must involve risk, but it is never blind risk. We must manage risk as much as possible, ensuring that the upside is large, the downside is small, and tail risks are controlled. Ideally, a master should avoid high-variance gambles and instead use low-volatility, repeatable processes to accumulate victories. This is why “the skillful fighter wins without flashy achievements.”
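
To see why repeated exposure to a small chance of ruin is so dangerous, consider a gamble that wipes you out with some fixed probability each round. The sketch below assumes independent rounds and a hypothetical 5% ruin chance; neither figure comes from the text.

```python
def survival_probability(ruin_chance_per_round: float, rounds: int) -> float:
    """Chance of never being wiped out across independent rounds."""
    return (1 - ruin_chance_per_round) ** rounds

# Hypothetical: each round carries a 5% chance of total ruin.
for rounds in (1, 10, 50, 100):
    print(f"{rounds:>3} rounds: {survival_probability(0.05, rounds):.1%} still standing")
```

Under these assumptions, survival drops to about 60% after 10 rounds and below 8% after 50, no matter how attractive each individual round looks.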

People with good “decision temperament” do not fret over the gain or loss of a single battle. As long as the process is correct, losing once or twice doesn’t matter; if the process is wrong, winning is just a fluke.

Scott Adams, creator of the Dilbert comic series, once made a great point [3]: if you are going to do something for a long time, you shouldn’t pursue a specific goal; you should pursue a “system.” A system is something that keeps evolving (a skill, a relationship, and so on) and from which many projects can emerge. You don’t care about the success or failure of a specific project, but about whether your system is good enough.

For instance, Adams’ writing is a system. Where the next piece is published, how viral it goes, or how much it earns doesn’t matter. The system doesn’t even have a clear goal—what matters is that it is always growing and that the probability distribution it outputs is getting better and better.

With a mature system and a fully optimized probability distribution, individual success becomes a natural byproduct.

✵

Finally, let’s simulate a few scenarios for a decision-making drill.

Question 1: Suppose a friend is seriously ill with a tumor. Should they choose conservative treatment or surgery? You consult a doctor and find the distributions for the two options:

- Conservative Treatment: Narrow distribution; almost certain to live for 5 more years with moderate quality of life, but unlikely to live longer.

- Surgery: Bimodal distribution; 30% chance of immediate death from failure, 70% chance of a complete cure.

This information is actually sufficient; you just need to compare the expected remaining years of life under each option. If the patient is 80 years old, conservative treatment is clearly the best choice: living 5 more years at that age is quite good, and even a full cure could not add much more. But if the patient is only 20, a cure could mean decades, and the surgery is well worth the gamble.
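
The comparison can be sketched in a few lines. The 5 years and the 70% cure rate come from the scenario above; the remaining lifespans after a cure (6 years at age 80, 60 years at age 20) are hypothetical placeholders, and the calculation ignores quality of life.

```python
CONSERVATIVE_YEARS = 5.0  # from the scenario: near-certain 5 more years
P_CURE = 0.7              # from the scenario: 70% chance of a complete cure

def expected_years_surgery(p_cure: float, years_if_cured: float) -> float:
    """Expected remaining years: failure counts as 0, a cure gives years_if_cured."""
    return p_cure * years_if_cured

# Hypothetical remaining lifespans after a full cure; not from the text.
years_if_cured = {"age 80": 6.0, "age 20": 60.0}

for label, years in years_if_cured.items():
    surgery = expected_years_surgery(P_CURE, years)
    choice = "surgery" if surgery > CONSERVATIVE_YEARS else "conservative treatment"
    print(f"{label}: surgery {surgery:.1f} vs conservative {CONSERVATIVE_YEARS:.1f} -> {choice}")

# Surgery wins on expectation only if a cure buys more than this many years.
break_even = CONSERVATIVE_YEARS / P_CURE
print(f"Break-even years after cure: {break_even:.1f}")
```

The break-even line is the useful part: with these figures, surgery only pays off on expectation when a cure would buy more than about seven years, and even near that threshold the narrow distribution of conservative treatment counts in its favor.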

Question 2: You have two job offers: one as a “cog in the machine” at a Big Tech firm, and one as a “jack-of-all-trades” at a startup.

- Big Tech: High salary and stable. But there is a left-tail risk: specialized skills mean if you are laid off, it’s hard to find the next job.

- Startup: Lower base salary, and the company might collapse. But you learn everything, gaining versatile skills. And there’s a small right-tail opportunity: if the company makes it, you become wealthy.

How to choose? It depends on your personal situation. If you have a mortgage and a new baby, you need low variance, so go to Big Tech. If you are young, unattached, and have nothing to lose, you can chase the heavy tail at the startup.

Question 3: Someone tries to sell you insurance. You know that, given the probability of the risk event, the mathematical expectation of the loss is lower than the premium—otherwise, how would the insurance company make money? So, do you buy it?

The answer depends on whether you can afford the loss. The probability of a house fire is extremely low, and most people will never use their home insurance—but I still recommend buying it, because if it does happen, you can’t afford it. The same goes for critical illness insurance. But whether you should buy extended warranties for a TV or car insurance beyond the mandatory minimum is a different story.
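
One common way to formalize “can you afford the loss” is to compare expected log-wealth with and without insurance. That choice of utility function is an assumption brought in here for illustration, not something the text prescribes, and every number below is hypothetical. In both cases the premium is higher than the expected loss.

```python
from math import log

def worth_insuring(wealth: float, loss: float, p_loss: float, premium: float) -> bool:
    """True if paying the premium gives higher expected log-wealth than going uninsured.

    Assumes log utility and that neither the loss nor the premium exhausts wealth.
    """
    insured = log(wealth - premium)
    uninsured = p_loss * log(wealth - loss) + (1 - p_loss) * log(wealth)
    return insured > uninsured

# Hypothetical numbers, in units where total wealth is 100.
house = worth_insuring(wealth=100.0, loss=90.0, p_loss=0.01, premium=1.5)
tv = worth_insuring(wealth=100.0, loss=1.0, p_loss=0.10, premium=0.2)

print("Insure the house:", house)
print("Insure the TV:", tv)
```

Under these assumptions the rare but ruinous loss is worth insuring and the small, frequent one is not, even though both policies lose money on expectation.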

You see, we aren’t weighing one result against another; we are accepting a future probability distribution. Often, decision-making is not about how good the best-case scenario is, but how bad the worst-case scenario is.

✵

When you learn to focus on probability distributions instead of results, your temperament changes.

You won’t be ecstatic over a fluke victory, nor will you collapse over a failure following a correct strategy, because you know that as long as the sample size is large enough, the Law of Large Numbers will eventually pull your average result toward the level your strategy deserves.
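
A small simulation shows the effect. The sketch assumes a hypothetical strategy that wins an even-money bet 55% of the time, so its true edge is 0.10 per bet; the seed is fixed only to make the run repeatable.

```python
import random

def average_payoff(n_bets: int, p_win: float, rng: random.Random) -> float:
    """Average result of n_bets even-money bets (+1 on a win, -1 on a loss)."""
    total = sum(1 if rng.random() < p_win else -1 for _ in range(n_bets))
    return total / n_bets

P_WIN = 0.55               # hypothetical win rate
TRUE_EDGE = 2 * P_WIN - 1  # long-run average payoff per bet

rng = random.Random(42)
for n in (10, 100, 10_000, 100_000):
    print(f"{n:>7} bets: average {average_payoff(n, P_WIN, rng):+.3f} (true edge {TRUE_EDGE:+.3f})")
```

Small samples can land almost anywhere, including well below zero, while the averages over large samples settle close to the true edge.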

You embrace uncertainty but avoid catastrophic tail risks like the plague. You place extreme importance on systems and rules because systems are the only tools that ensure a robust probability distribution, while rule by individual discretion is full of randomness. You believe that procedural justice is the long-term optimal solution. Your emotions remain stable.

A high-level decision-maker is like a Stoic archer: you draw the bow and aim with all your might, because that is the process you can control. Once the arrow leaves the string, you stay calmly indifferent to whether it hits the bullseye, because that part is a random draw of wind and noise.

Notes

[1] Baron, Jonathan, and John C. Hershey. 1988. “Outcome Bias in Decision Evaluation.” Journal of Personality and Social Psychology 54 (4): 569–579.

[2] Duke, Annie. Thinking in Bets: Making Smarter Decisions When You Don’t Have All the Facts. New York: Portfolio/Penguin, 2018.

[3] Elite Daily Lesson, Season 1. Scott Adams passed away in January 2026. We discussed his ideas many times in the column and miss him dearly.