No Free Lunch Theorem: Impermanence and the Necessity of Bias in Decision-Making

In this section, we enter the second module: Decision-making and Judgment. Simply put, it’s about how to discover the best options, evaluate situations, and finally make the call. You’ve likely heard many theories about decision-making, but the mental tools here will provide deeper insights—starting with the “meta-rule” of all decisions.

The rule is this: there is no such thing as a perfect decision.

People love to fantasize about perfect decisions. For instance, in Romance of the Three Kingdoms, Zhao Yun protects Liu Bei on a journey to Eastern Wu. Before they leave, Zhuge Liang gives him three silk pouches, saying he should open one whenever he faces a crisis. It works perfectly. Who wouldn’t want a brilliant strategist or a secret "silk pouch" tip?

Unfortunately, the real world is not a novel. Both Qin Shi Huang and Zhu Yuanzhang tried to design long-term top-level systems for their descendants, but reality quickly outpaced their imagination. Yet, we haven’t learned the lesson.

We want "life algorithms." We want a course that tells us exactly which major to choose or how to make money. We suggest using Big Data for the "optimal solution." We hope AI will provide the best decision options. Even if those are impossible, we at least demand that decisions be "objective and neutral": stripping away all emotions and personal biases, calculating the correct answer like a math problem.

I must say, all of this is a delusion.

There’s no such thing as a "free lunch." Whether it’s Zhuge Liang, Qin Shi Huang, or Zhu Yuanzhang, you cannot pre-arrange everything perfectly. There are no life algorithms, and no universally "correct" decisions.

Furthermore, demanding "objectivity and neutrality" is a fundamentally flawed requirement.

If you insist on being perfectly objective and neutral, you won’t be able to do anything. No decision can be truly objective. I say this with strong theoretical backing: the "No Free Lunch Theorem" (NFL).

✵

The No Free Lunch Theorem comes from optimization theory in computer science, proposed in 1997 by two computer scientists, David Wolpert and William Macready [1]. I sincerely hope that whether you study philosophy or social sciences, you develop some cross-disciplinary awareness and learn a bit about computer science. This theorem is profound; it essentially sets a limit on intelligence.

Here’s the story. Back then, the computer science community was like a martial arts tournament, with everyone proposing their own "best" algorithms. Some said their genetic algorithms, modeled on biological evolution, could find engineering solutions. Others claimed their simulated annealing algorithms, derived from physics, could help jump out of local optima. Everyone wondered: can we find a "Dragon-Slaying Saber"—a single algorithm to solve a wide range of problems? Or more simply, is there a best, universal algorithm that solves everything?

Wolpert and Macready’s theorem said: No. They proved mathematically that, averaged across "all possible problems" (every objective function that could be defined on the search space), any two algorithms perform exactly the same.

In other words, if an algorithm performs exceptionally well on a specific class of problems, it must inevitably fail on others. Overall, if you seek to solve all problems, an advanced chip-routing algorithm is no better than random guessing.
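The claim can be checked by brute force on a toy problem. The sketch below is an illustration, not the construction from the 1997 paper: the four-point domain, the three score values, and the two search strategies (`fixed_order` and `adaptive`) are all made up for the example. It enumerates every possible scoring function and totals the best score each strategy finds within a fixed budget of evaluations.

```python
from itertools import product

DOMAIN = range(4)   # candidate solutions (hypothetical toy domain)
VALUES = range(3)   # possible scores for each candidate

def fixed_order(history):
    """Non-adaptive search: always try the lowest unvisited point."""
    visited = {x for x, _ in history}
    return min(x for x in DOMAIN if x not in visited)

def adaptive(history):
    """Adaptive search: after a score of 1 or more, jump to the highest
    unvisited point; otherwise try the lowest unvisited point."""
    visited = {x for x, _ in history}
    unvisited = [x for x in DOMAIN if x not in visited]
    if history and history[-1][1] >= 1:
        return max(unvisited)
    return min(unvisited)

def best_after(algorithm, f, budget):
    """Best score found after `budget` evaluations of f (a tuple of scores)."""
    history = []
    for _ in range(budget):
        x = algorithm(history)
        history.append((x, f[x]))
    return max(score for _, score in history)

def total_score(algorithm, functions, budget=2):
    """Sum of the best scores found across a whole family of functions."""
    return sum(best_after(algorithm, f, budget) for f in functions)

# Every function from DOMAIN to VALUES: 3**4 = 81 of them.
ALL_FUNCTIONS = list(product(VALUES, repeat=len(DOMAIN)))
# A structured subset: functions whose scores never decrease.
NON_DECREASING = [f for f in ALL_FUNCTIONS if list(f) == sorted(f)]

print(total_score(fixed_order, ALL_FUNCTIONS), total_score(adaptive, ALL_FUNCTIONS))
print(total_score(fixed_order, NON_DECREASING), total_score(adaptive, NON_DECREASING))
```

Summed over all 81 functions the two strategies tie. Restricted to the non-decreasing functions, the adaptive strategy comes out ahead, because its habit of jumping to the top happens to suit that structure.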

This is why it’s called "No Free Lunch": to perform better than other algorithms in one domain, you must perform worse in another. AlphaGo is brilliant at Go because it is "biased"—it was highly optimized for that specific problem. If you ask it to paint, it fails completely.

You must pay a price for optimization.

✵

Buddhism enthusiasts should reflect on the No Free Lunch Theorem. I personally believe it declares that any "conditioned dharma" (active methods) is inherently "tainted" (limited): it cannot solve all problems and is inherently localized.

If you say "All conditioned phenomena are like a dream, an illusion, a bubble, a shadow," the theorem adds that perfection does not exist in this world. Doing nothing is the only "perfection." If you have any pursuit, you must use conditioned methods, which means being "tainted," paying the price for optimization, giving up some things to get others, and enduring the "suffering of the tainted."

Thus, the "No Free Lunch Theorem" is the scientific version of "All conditioned things are impermanent."

That’s a side note, but the key point is: to engage with the world, you must use limited (tainted) methods.

The good news is that you can choose which class of problems to solve, and this choice is inherently subjective. How do we choose? We need to borrow a term from machine learning: "Inductive Bias" [3].

Learning means inferring laws that are supposed to hold for an unbounded future from a finite amount of past experience. But David Hume (1711–1776) famously argued [2] that induction is logically unjustifiable: just because the sun rose yesterday and the day before, why should you assume it will rise tomorrow? What if the parameters of the universe change?

For a machine to learn, it must first bridge this logical gap by believing in something blindly. These beliefs are called "Priors." To learn anything from finite experience, you must first believe something without justification.

For example, Convolutional Neural Networks (CNNs) are great at image processing because they have an implanted prior: they believe "local features are meaningful when combined" and "image features are roughly invariant under translation." Recurrent Neural Networks (RNNs) are good at language because their prior is that "the previous word and the next word are temporally related."

From an absolutely objective and neutral standpoint, these priors are baseless. Why should you believe our world works this way? What if the world suddenly becomes chaotic? But we don’t care—we’d rather have the bias. These pre-set priors are "Inductive Bias."

Without these biases, observing the world without any "pre-set glasses," you would see only a matrix of dots and a stream of noise. Even massive data would not help: with no prior, every way of extending the data to unseen cases looks equally likely, and the algorithm is paralyzed.

You must bet on structure to find structure and judge it.
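A small experiment makes the point concrete. This is a sketch under invented assumptions: the three-bit inputs, the four training examples, and the bias "the label copies one input bit" are all hypothetical. A learner with no bias treats every labeling of the eight inputs as a candidate; a learner with a strong bias considers only three.

```python
from itertools import product

INPUTS = list(product([0, 1], repeat=3))   # all 8 possible three-bit inputs

# Hypothetical training data: the hidden rule is "label = first bit".
TRAIN = {(0, 0, 0): 0, (0, 1, 1): 0, (1, 0, 0): 1, (1, 1, 0): 1}
QUERY = (1, 1, 1)                          # an input never seen in training

def consistent(hypotheses):
    """Keep only the hypotheses that agree with every training example."""
    return [h for h in hypotheses if all(h[x] == y for x, y in TRAIN.items())]

# No bias: each of the 2**8 = 256 possible labelings is a candidate.
unbiased = [dict(zip(INPUTS, labels))
            for labels in product([0, 1], repeat=len(INPUTS))]

# Strong bias: the label simply copies one of the three input bits.
biased = [{x: x[i] for x in INPUTS} for i in range(3)]

unbiased_votes = [h[QUERY] for h in consistent(unbiased)]
biased_votes = [h[QUERY] for h in consistent(biased)]

print(len(unbiased_votes), sum(unbiased_votes))
print(biased_votes)
```

Without a bias, sixteen hypotheses survive the training data and they split eight to eight on the unseen input, so the data alone says nothing about it. With the bias, a single hypothesis survives and it gives a definite answer. That answer is only as good as the bet behind it.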

✵

Decisions are the same. Thinking you can sit there, pull up all world variables, perform precise simulations, calculate all probabilities, and have a "guidebook" tell you exactly what to do… is pure fantasy, because it violates the No Free Lunch Theorem.

The reality is that there are infinitely many things you could do, and success is possible in every field. You must first subjectively choose a domain and set your inductive bias for that domain before you can conduct specific research and calculate the best decision.

Suppose one day you have a sudden thought: "I want to go to Guangzhou to make money." This isn’t actually a decision; it’s an "aspiration" (vow). But this aspiration is crucial; without it, there is no decision.

The world is huge. People can do many things in many places for many purposes. You chose Guangzhou to make money. No scientific decision theory can prove this choice right or wrong. Someone else might go to Guangzhou to be with their girlfriend, or make money elsewhere. They are also right.

"Going to Guangzhou" narrows your search space; "making money" defines your objective function; and your intuition/belief that "Guangzhou offers money-making opportunities" is your inductive bias. With this aspiration, you can start making decisions: we know what you want to do, so we research which job in Guangzhou best helps you make money.

The No Free Lunch Theorem says: No matter how rational the decision-making process is, the initial intent is always "arbitrary" (willful).
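The Guangzhou example can be sketched in a few lines. Everything here is hypothetical: the `Option` records, the income and free-time figures, and the `decide` helper are invented for illustration. The search procedure is identical in both calls; only the aspiration (which options count, and what is being maximized) changes, and the answer changes with it.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Option:
    city: str
    job: str
    expected_income: int   # made-up figure, arbitrary units
    free_time: int         # made-up figure, arbitrary units

# Hypothetical menu of life options.
OPTIONS = [
    Option("Guangzhou", "trade sales", 30, 2),
    Option("Guangzhou", "teacher", 15, 6),
    Option("Chengdu", "startup", 40, 1),
    Option("Chengdu", "civil servant", 12, 8),
]

def decide(options, in_search_space, objective):
    """The rational part: maximize the objective inside the search space.
    Both arguments come from the aspiration, not from the algorithm."""
    candidates = [o for o in options if in_search_space(o)]
    return max(candidates, key=objective)

# Aspiration 1: "go to Guangzhou to make money".
money_in_guangzhou = decide(
    OPTIONS,
    in_search_space=lambda o: o.city == "Guangzhou",
    objective=lambda o: o.expected_income,
)

# Aspiration 2: "anywhere, as long as I have time for myself".
leisure_anywhere = decide(
    OPTIONS,
    in_search_space=lambda o: True,
    objective=lambda o: o.free_time,
)

print(money_in_guangzhou.job, "|", leisure_anywhere.job)
```

Nothing inside `decide` can say which of the two aspirations is the right one; it can only optimize once an aspiration has been supplied.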

In fact, arbitrariness is an essential ingredient of decision-making.

No decision system can foresee everything. Even the strongest AI cannot list the probability distributions and costs of every option so clearly that you just have to "pick one." The future contains not only uncertainty but unquantifiable uncertainty—even the probability distributions themselves are uncertain.

This means that in the end, there will always be parts you can’t calculate. You will eventually reach a moment where you think: "Whatever, I’m just going to do it this way." As the French philosopher Jacques Derrida put it:

"A decision that did not go through the ordeal of the undecidable would not be a free decision, it would only be the programmable application or unfolding of a calculable process." [4]

Deciding is not an unfolding; it is an undertaking (bearing the burden). This is why, no matter how strong AI gets, many "micro-decisions" must still be left to humans.

✵

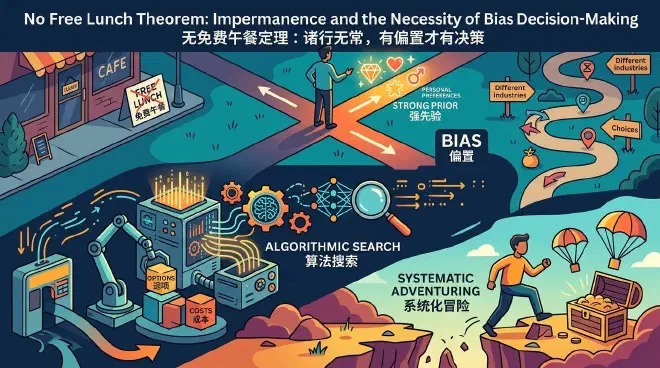

Admitting there’s no free lunch and understanding that decisions are essentially subjective adventures doesn’t mean we should abandon decision science. Its role is limited, but often critical. Let’s look at the "meta-cognitive" mindset for making decisions, in three steps.

Step 1: You must have a "Strong Prior"—set your bias.

Why are you doing this business instead of thousands of others? Why this industry or track? There is no objective answer. Faced with an injured person, a hitman’s strategy is the opposite of a doctor’s. You must make a subjective choice.

Maybe it’s intuition, a life vision, or a mysterious preference—a pure, irrational bias—but you must choose a direction first. If you don’t, you can’t use algorithms; mathematically, you’re at a dead end. Even a wrong map is better than no map.

But bias isn’t random; it mostly comes from two sources:

One is your Values—things that are non-negotiable. What will you never do? What price will you never pay? What do you insist on pursuing? What do you value most? AI engineers would call these your "value function" or objective function.

The other is your Basic Assumptions about the world: Do you believe this field is "linearly incremental" or "heavy-tailed"? Do you believe in "compound interest" or "blockbusters"? This determines your search direction.

Position first, then unfolding. Without a position, there is no way to search for information.

Step 2: Algorithmic Search.

While the NFL theorem says no algorithm is universally superior, it allows for a "strongest algorithm" for a specific, local problem.

We need to find that algorithm. We should do research, perform analysis, and simulate future evolution. We search for the best options and consider the costs.

If everyone had maximum intelligence, the best algorithm would be determined by the value function (bias) you set in the first step. Every decision is a quest to maximize that value function under certain conditions.

At this stage, you must be extremely rational and objective, respecting the hard realities of the world. This is essentially an engineering problem. Most of the decision tools we will discuss later reside in this step.

Step 3: Systematic Adventuring.

You must admit you are taking a risk; you can’t just follow one path to the end. We talk about setting "bias," but we don’t mean "dogmatism." Bias is taking a position to get started; dogmatism is a fixation—holding onto your initial position even when reality tells you it’s wrong.

Bias is a sword; dogmatism is a shackle. Most people are either "bias-free" (indecisive, trying to be objective) or "fully dogmatic" (never changing course). High-level decision-makers are "Strong Bias, Weak Dogmatism": they set out with a bias, but if they find they’re on the wrong path, they immediately switch to a different bias.

Only then can you make decisions while remaining capable of adjusting or exiting them.
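The difference between bias and dogmatism can be sketched as a toy simulation. The payoff table, the `patience` parameter, and the `follow` function are assumptions made up for this example, not a method from the text. Both agents start with the same bias; one abandons it after a fixed number of consecutive failures, the other never does.

```python
def follow(payoffs, start, patience=None):
    """Commit to `start`, collect one payoff per round, and switch to the
    next bias after `patience` consecutive failures.
    patience=None means never switching (dogmatism)."""
    names = list(payoffs)
    current = start
    misses = 0
    total = 0
    path = []
    for t in range(len(payoffs[start])):
        path.append(current)
        reward = payoffs[current][t]
        total += reward
        misses = 0 if reward else misses + 1
        if patience is not None and misses >= patience:
            # Reality says the position is wrong: move to the next bias.
            current = names[(names.index(current) + 1) % len(names)]
            misses = 0
    return total, path

# Hypothetical world: bias A pays off once and then stops working.
PAYOFFS = {
    "A": [1, 0, 0, 0, 0, 0],
    "B": [0, 1, 1, 1, 1, 1],
}

dogmatic_total, dogmatic_path = follow(PAYOFFS, start="A")
flexible_total, flexible_path = follow(PAYOFFS, start="A", patience=2)

print(dogmatic_total, dogmatic_path)
print(flexible_total, flexible_path)
```

In this made-up world the dogmatic agent stays on A for all six rounds, while the flexible agent pays for two failures and then moves to B. The value of `patience` is itself a bias: set it too low and you never give a position a fair chance.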

✵

Why is Apple’s design aesthetic so strong? Did other companies not think of it? The answer is that it was simply Steve Jobs’ personal aesthetic bias. You can make mass-market compatible PCs, or beautiful but expensive ones; you can have an open but chaotic ecosystem, or a closed but quality-assured one. There is no right or wrong here.

Warren Buffett practices value investing, holding a few stocks for years; Jim Simons of Renaissance Technologies uses quantitative trading, using algorithms to make decisions in milliseconds. Who is right? Both are, as long as they make money. They just chose different biases.

If something is both cheaper and better, everyone knows to choose it, and that’s not a decision. Real decisions usually involve trade-offs: This job is stable but has a low ceiling; that one might make you rich but is volatile. Which do you choose?

There is no objective, neutral answer. Your decision ultimately depends on your bias.

✵

People love to talk about being objective, neutral, and open-minded, but the prerequisite for getting things done is being non-neutral, having a position, and having a direction. If you’re afraid to get your hands dirty, you’ll never be a player.

Judgment after setting values should be as objective as possible, but values themselves must be subjective. The No Free Lunch Theorem requires you to be an agent with a position. Have a position, but no fixation; have the right to intend, but also the right to adjust based on reality. Avoid "wanting everything"; use your core preferences to set your value function, sacrificing some dimensions to gain excess returns in others. That is what decision-making means.

In the AGI era, the core value of humans lies in setting bias: you decide which problems to solve, you define what is good or bad, and you draw the first stroke on a blank canvas.

Doing nothing is the only state that is truly perfect and unbiased. Bias is the proof of your vitality.

[Closing Poem]

In the sea of chaos, no wise or fool,
Only causes and conditions converge.
All laws are empty, with no fixed form,
Where a thought arises, the true god appears.

Notes

[1] Wolpert, David H., and William G. Macready. 1997. “No Free Lunch Theorems for Optimization.” IEEE Transactions on Evolutionary Computation 1 (1): 67–82.

[2] Hume, David. 1748. An Enquiry Concerning Human Understanding.

[3] Mitchell, Tom M. 1980. “The Need for Biases in Learning Generalizations.” Technical Report, Rutgers University.

[4] Derrida, Jacques. 1992. “Force of Law: The ‘Mystical Foundation of Authority.’” In Deconstruction and the Possibility of Justice, edited by Drucilla Cornell, Michel Rosenfeld, and David Gray Carlson, 3–67. New York: Routledge.